The token leaderboard trap

AI made coding faster, but not always shipping. Here is how to measure productivity without confusing token burn with value.

I spend a huge amount of my working day with AI coding tools.

Not in the “AI suggested a for loop” way. I mean implementation agents, document curation agents, and code review automation. I add AI to all areas of my work as a software engineer.

At Amazon, I am among the top 250 or so users worldwide of kiro-cli, Amazon’s equivalent to Claude Code or Codex CLI. My usage regularly exceeds what the monthly limits would be if I were an external user on the highest plan. It saves me time repeatedly because I have turned individual prompts into workflows that execute periodically for my individual work and my team’s projects.

But there’s an uncomfortable part.

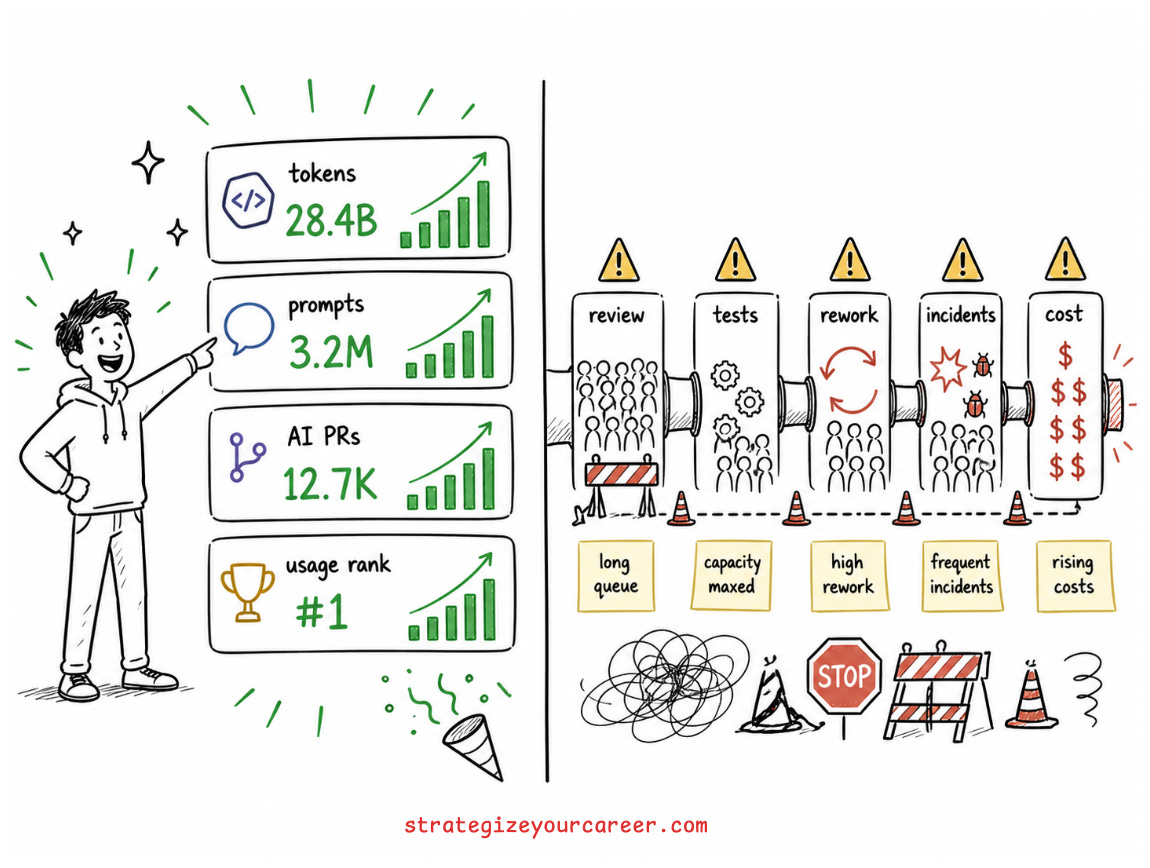

The same dashboard that proves I am using AI seriously could also reward complete nonsense.

I could burn tokens generating diffs nobody asked for. I could leave an infinite loop running, launching prompts that scan things without taking any action. I could climb a leaderboard while not shipping any code.

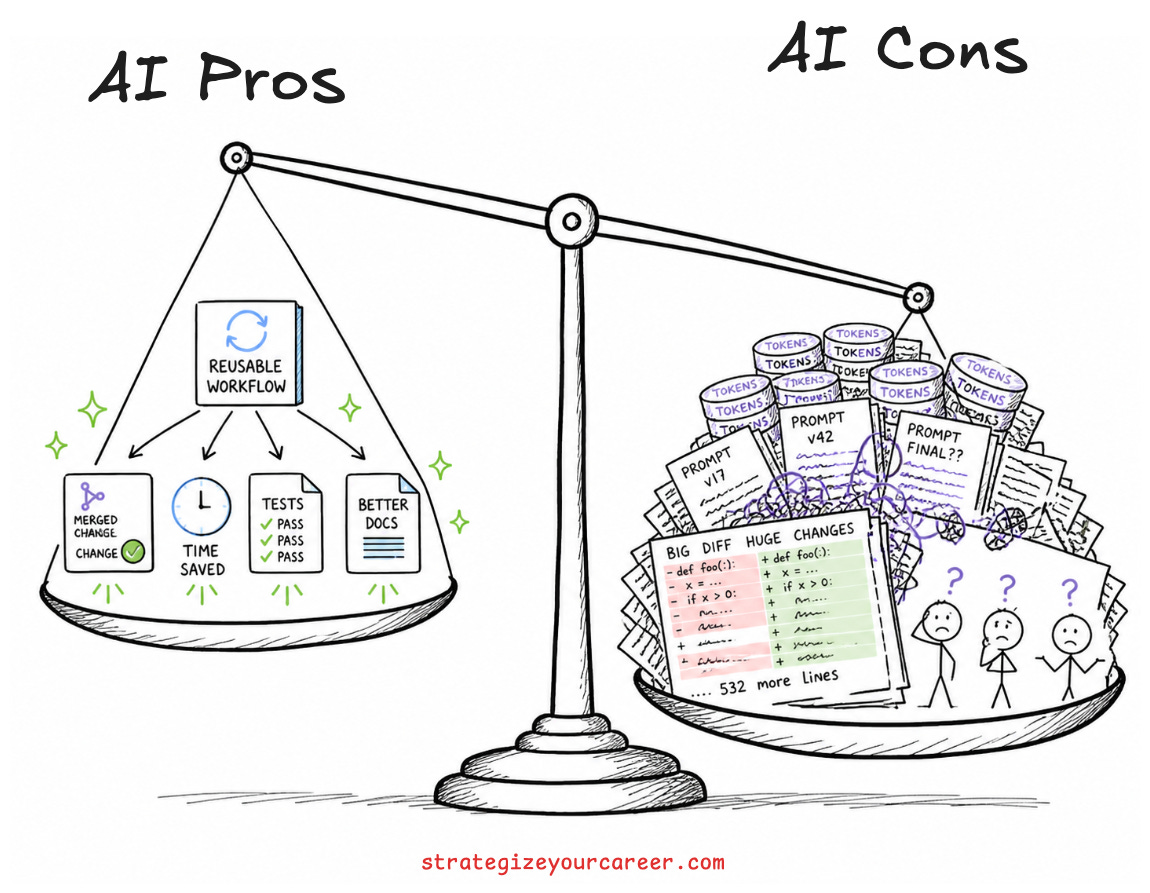

That is the trap in AI developer productivity. AI can improve developer productivity when it reduces the scarce human attention needed to ship valuable, reliable software. It does not improve productivity just because developers write more prompts, generate more code, or spend more tokens.

A bad pull request is visible. A bad prompt is mostly invisible.

This is why the question is no longer “does AI make developers faster?”

Sometimes it does. Sometimes it slows them down. Sometimes it makes them feel faster while moving the bottleneck to review, testing, and rework.

The better question is this:

“Did AI help the human-AI system ship better software with less wasted judgment, less rework, and an acceptable cost?”

That’s the focus of this article.

In this post, you’ll learn:

How to measure AI developer productivity without confusing token usage with shipped value

Why AI developer productivity studies disagree and what that means for real engineering teams

Which developer productivity metrics still work after AI, including DORA metrics and SPACE metrics

What tokenmaxxing is and why it is becoming the new lines of code

How to build an AI developer productivity scorecard that catches speed, quality, review load, learning, and cost

AI Developer Productivity Did Not Create Bad Productivity Metrics

AI did not break developer productivity measurement. It exposed how fragile it already was.

Before AI, teams already overcounted the easiest artifacts: lines of code, commits, pull requests, story points, tickets closed, and sprint velocity. These things are not useless. A team that never merges code has a problem. A ticket queue that never moves has a problem.

The mistake is treating visible activity as productivity.

I have seen engineers break a piece of work into ten small PRs that only created overhead. I have also seen senior engineers spend a week on a design, challenge the scope, and save a team months of work. “Productivity metrics” dashboards prefer the first engineer. The business should prefer the second.

This is why DORA and SPACE became useful corrections.

DORA metrics look at delivery-system health: lead time for changes, deployment frequency, change failure rate, time to restore service, and now deployment rework rate in newer guidance. They are not perfect, but they pull the conversation away from “who typed the most code?” and toward “can this team move changes to production without constantly setting itself on fire?”
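
To make those definitions concrete, here is a minimal sketch of how the four classic DORA numbers could be computed from deployment records. The `Deployment` record, its field names, and the sample data are assumptions for illustration, not the output of any specific tool. It also approximates time to restore as the time from the failing deployment to restoration, and it assumes a non-empty list of deployments.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import Optional


@dataclass
class Deployment:
    """Hypothetical record of one production deployment."""
    committed_at: datetime                  # when the change was committed
    deployed_at: datetime                   # when it reached production
    failed: bool = False                    # did it cause a production failure?
    restored_at: Optional[datetime] = None  # when service was restored, if it failed


def dora_summary(deployments: list[Deployment], period_days: int) -> dict:
    """Summarize delivery health for a non-empty list of deployments."""
    lead_times = [d.deployed_at - d.committed_at for d in deployments]
    failures = [d for d in deployments if d.failed]
    # Approximation: measure restore time from the failing deployment.
    restore_times = [d.restored_at - d.deployed_at for d in failures if d.restored_at]
    return {
        "median_lead_time_hours": median(t.total_seconds() for t in lead_times) / 3600,
        "deploys_per_day": len(deployments) / period_days,
        "change_failure_rate": len(failures) / len(deployments),
        "median_time_to_restore_hours": (
            median(t.total_seconds() for t in restore_times) / 3600
            if restore_times else None
        ),
    }


# Made-up sample data: four deployments over four days, one of which failed.
base = datetime(2026, 1, 5, 9, 0)
sample = [
    Deployment(base, base + timedelta(hours=4)),
    Deployment(base + timedelta(days=1), base + timedelta(days=1, hours=8)),
    Deployment(base + timedelta(days=2), base + timedelta(days=2, hours=6),
               failed=True, restored_at=base + timedelta(days=2, hours=8)),
    Deployment(base + timedelta(days=3), base + timedelta(days=3, hours=2)),
]
summary = dora_summary(sample, period_days=4)
print(summary)
```

Nothing in this summary mentions who typed the code or how many tokens were spent. That is the point of these metrics: they describe the delivery system, not the individual.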

SPACE goes wider: Satisfaction and well-being, Performance, Activity, Communication and collaboration, and Efficiency and flow. The lesson is not that every team needs a dashboard ranking their engineers. The lesson is that productivity is multidimensional and far from simple. If you collapse it into one number, engineers will optimize that number, and your system will get weird.

AI makes that failure mode more expensive because AI-era metrics are even easier to game.

Before AI, the lazy metric was lines of code. After AI, the lazy metric is tokens, prompts, accepted suggestions, AI-created PRs, or model usage rank.

New context. Same old mistake.

Why AI Developer Productivity Studies Look Contradictory

I had to read quite a few articles to write this post. There’s no clear answer on how to measure AI developer productivity.

The evidence looks messy because the question is messy.

The original GitHub Copilot study, published in 2023, found developers finished a bounded JavaScript task 55.8% faster with Copilot. That is real. If the task is clear, local, testable, and mostly implementation work, AI can be a ridiculous shortcut. Humans can’t compete with AI’s speed.

Then METR ran a randomized study with experienced open-source developers working in mature repositories they already knew. The result went the other way. Developers using early-2025 AI tools took 19% longer. METR later wrote in February 2026 that its next experiment design needed to change because it’s getting harder to measure AI vs no-AI now that AI is everywhere.

Both can be true.

A small JavaScript server task is not the same as a production codebase with years of context, hidden invariants, flaky tests, team conventions, security constraints, and reviewers who actually care. AI is very good at generating plausible implementations. It is much weaker at knowing which local assumption will break when the change touches the wrong boundary.

I always think of AI as a very smart engineer on their first day at a new company. They are smart, and they may try to apply design patterns that worked in their previous context, but they lack all the context about the company because it’s only their first day.

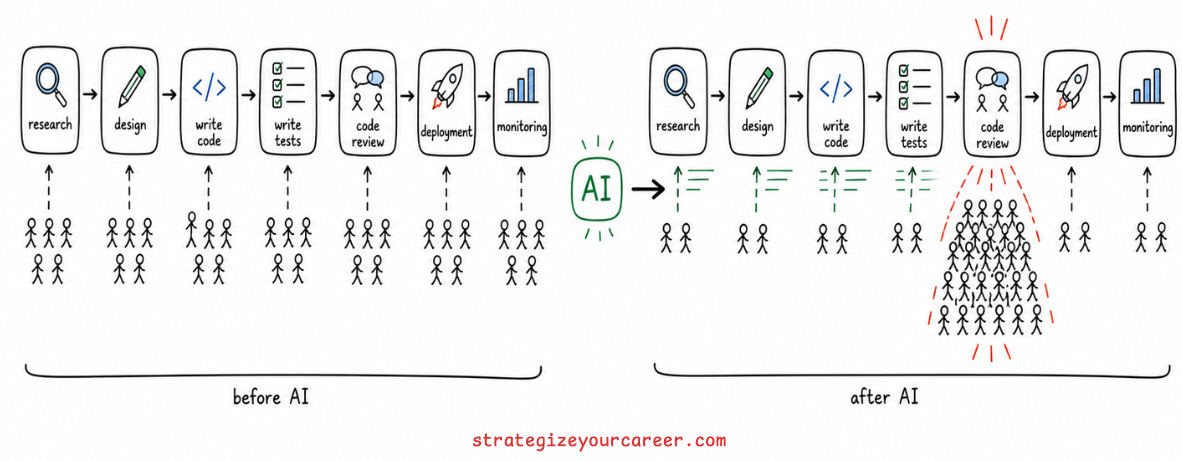

DORA’s AI research points in the same direction. AI can improve individual flow and satisfaction while still hurting delivery stability when it increases batch size and review burden. That sentence should make every engineer nod in 2026. We are on a rampage of PR submissions, and we are slow at reviewing.

The issue is not whether AI is good or bad. That is too vague to be useful.

AI is a task-shape multiplier.

It helps when the bottleneck is boilerplate, search, transformation, test scaffolding, documentation, or unfamiliar code comprehension.

It hurts when the bottleneck is deep context, architecture, debugging ambiguity, correctness, or trust.

A team can get 40% faster at writing code and 10% slower at shipping software because review has become a problem.

So now the question is: is that team’s developer productivity 40% higher or 10% lower?
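
Here is a small worked example of how both numbers can be true at once. The stage names and hours are made up for illustration. The only thing that matters is the shape: coding time drops while review and rework grow.

```python
def total_hours(stages: dict[str, float]) -> float:
    """End-to-end hours for one change, summed across all stages."""
    return sum(stages.values())


def percent_change(before: float, after: float) -> float:
    """Positive means it now takes longer, negative means it got faster."""
    return (after - before) * 100 / before


# Hypothetical hours per shipped change, before and after adopting AI.
before = {"coding": 10.0, "review": 6.0, "rework": 4.0}
after = {"coding": 6.0, "review": 10.0, "rework": 6.0}

coding_change = percent_change(before["coding"], after["coding"])
shipping_change = percent_change(total_hours(before), total_hours(after))

print(f"Coding time changed by {coding_change:+.0f}%")      # -40%
print(f"Time to ship changed by {shipping_change:+.0f}%")   # +10%
```

If you only measure the coding stage, this team looks 40% faster. If you measure the whole path to production, it is 10% slower.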

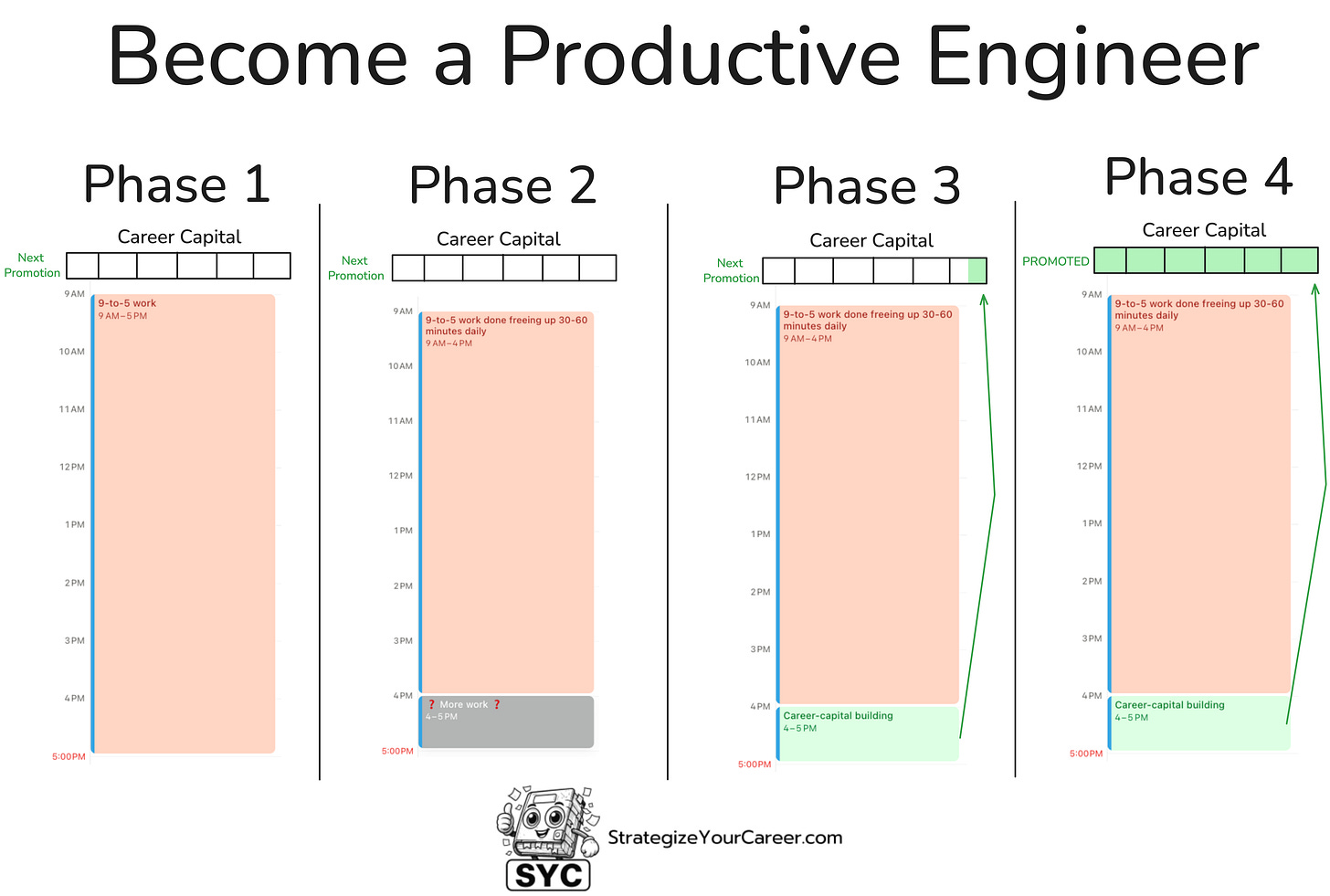

This is an article inside our system to move between phases 2 and 3. You’ll be able to build those XP points necessary for your next promotion if you master AI. It’s becoming an important criterion in promotions.

Check out the full system here.

The AI Coding Bottleneck Moved From Typing to Judgment

Before AI, many developer bottlenecks were obvious. Typing code. Finding reference patterns. Reading a giant document. Writing boilerplate. Creating tests. Remembering the exact syntax of a library you use twice a year.

After AI, many of those bottlenecks moved.

Now the hard parts are context quality, prompt design, review load, verification, PR size, rework, cost, and trust calibration. The work did not disappear. It changed shape.

This is what I see in my day-to-day work. AI helps me review code faster when I give it the review checklist I already trust. It helps me understand long documents when I ask it to extract the decisions, risks, and open questions. It helps me debug when I feed it logs, local hypotheses, and the code paths I have already inspected.

It’s like having an executive assistant who feeds me an executive summary before I join a meeting.

But if I tell my executive assistant to “fix this” without framing the problem I found and the kind of solution I want, it won’t move the ball forward. Instead, it will be like company bureaucracy: people moving but not delivering value.

Motion used to be expensive. You had to pay people’s salaries for it. Now, motion is cheap.

Judgment is not.

Before AI, companies already did a lot of motion. But some people would have stopped each other in their tracks, saying: “No, we are not able to support this project because we have no engineering capacity.”

But now, when a project looks like only a week of work, it’s easier to fall into the motion trap. “Sure, while Engineer-A is working on the important project, also take a look at this. It should be easy.”

The engineers getting the most from AI are not the ones who type the shortest prompts. They are the ones who know where the bottleneck moved. They use AI to compress the boring parts of engineering, protect their attention and time from added motion, and then spend the saved attention on the parts that still need a human with context.

That is the productivity shift I care about.

Tokenmaxxing Is the New “Lines of Code”

Tokenmaxxing is treating token burn, request volume, or AI leaderboard rank as proof of being AI-native.

It is funny because it is true. It is dangerous because it can become policy.

I understand why companies measure usage. Most people were attached to their old workflows. If leadership wants engineers to learn AI, they need some pressure. Usage dashboards can show whether people are experimenting, whether licenses are wasted, and where the cost is going. That is legitimate.

The problem starts when usage becomes a ranking.

Raw AI usage tells you adoption and cost. It does not tell you productivity.

In my own work, high usage can mean I automated a recurring workflow that saves time every week. Because it’s a real use case that is present in my day-to-day, I will have high token usage. It can also mean I started using AI inefficiently and dedicated 20 prompts to solve something that could be solved in 1 if I had the right harnesses and context setup.

The billing system sees both. The codebase only benefits from one.

This is why tokenmaxxing feels like lines of code or number of commits in a new jacket. I read this comparison in an article from The Pragmatic Engineer, and I found myself nodding in agreement.

Those older metrics were easy to count, easy to game, and weakly connected to value. I’ve seen people posting comments in each PR to increase the number of PRs they are involved in. Tokens have the same shape. A developer can burn a lot of tokens because they left a loop running, doing busywork.

A company can see bad PRs. It cannot easily see bad prompts.

That gap matters because AI work creates a new invisible layer between intent and code. The prompt is where the problem is framed. The context is where the model is steered. The output is only the artifact you happen to see in review.

AI Budgets Are Now Part of Software Engineering Architecture

For a while, AI coding tools felt like an all-you-can-eat restaurant. Pay the monthly fee, ask as much as you want, and enjoy.

That era is ending.

GitHub announced on April 27, 2026, that Copilot plans will move to usage-based billing on June 1, 2026. They describe the shift toward GitHub AI Credits and token-based usage. Completions may still be bundled in some cases, but agentic sessions and heavier model usage are becoming a problem.

Uber’s CTO said they burned their entire 2026 budget for AI in 4 months.

The direction is obvious, even if every vendor uses different packaging.

Agentic work costs money. Long context costs money. Agents that run without constraints cost money. Using the latest model costs more money with every new release.

AI is no longer only a developer-experience tool. It is part of the bill for any project.

This changes the productivity question.

The old question was:

“Did AI save time?”

The better question is:

“Did AI save scarce human attention at an acceptable cost?”

Those are not the same. If the cost of doing something with AI is higher than paying a human to do it, we have flipped the economics. Unless we need something done fast, we’ll try to save money.

If an agent spends three dollars to save a senior engineer thirty minutes on a recurring operational task, I probably want that all day. If an agent spends thirty dollars generating a giant PR that takes two reviewers an afternoon to untangle, that is not productivity. That is actually increasing both AI and human costs.

But hey, that giant PR consumed a lot of tokens, and the author got to the top of the daily leaderboard…

*facepalm*

Cost per shipped change is going to matter. Not because engineers should become accountants, but because unlimited AI usage hides bad engineering habits the same way unlimited cloud spend once hid bad architecture.

I remember how some engineers created a “how much this meeting costs” tool to avoid having big, inefficient meetings. Just multiply the average hourly salary of a certain role/level by the number of people and the duration of the meeting.

We may have something similar with AI eventually.
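
As a rough sketch of what that could look like, here is the meeting formula next to an equivalent for AI-assisted changes. The hourly rate and the four-hour afternoon are assumptions I picked for illustration. The three-dollar and thirty-dollar agent runs are the two scenarios described above.

```python
def meeting_cost(hourly_rate: float, attendees: int, hours: float) -> float:
    """Average hourly rate times number of people times meeting duration."""
    return hourly_rate * attendees * hours


def ai_change_cost(ai_spend: float, hourly_rate: float, human_hours: float) -> float:
    """AI spend plus the cost of the human time the change consumed."""
    return ai_spend + hourly_rate * human_hours


HOURLY_RATE = 100.0    # hypothetical loaded cost of one engineer-hour
AFTERNOON_HOURS = 4.0  # hypothetical length of "an afternoon"

# A one-hour meeting with eight engineers.
print(meeting_cost(HOURLY_RATE, attendees=8, hours=1.0))  # 800.0

# Scenario 1: a $3 agent run saves a senior engineer 30 minutes.
small_task_net_saving = HOURLY_RATE * 0.5 - 3.0
print(small_task_net_saving)  # 47.0

# Scenario 2: a $30 agent run produces a giant PR that two reviewers
# spend an afternoon untangling.
giant_pr_cost = ai_change_cost(30.0, HOURLY_RATE, human_hours=2 * AFTERNOON_HOURS)
print(giant_pr_cost)  # 830.0
```

In the second scenario the token bill is the small part. Most of the cost is reviewer time, which no token leaderboard shows.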

Why AI Is Causing Layoffs

Most engineers miss the finance layer because it feels outside their job.

But it can get you laid off, so you’d better pay attention.

AI infrastructure is a massive fixed-cost bet. A company that spends heavily on data centers, chips, and capacity may not expense all of that in one quarter like payroll. The assets can be capitalized and depreciated over time. But the cash still leaves. Free cash flow changes. Pressure on return on invested capital changes. Executives then need a story that makes the spending look rational, and they need to adjust their numbers.

Human labor is also a large fixed or semi-fixed run-rate cost. Payroll shows up differently from infrastructure, but from the point of view of leadership, both are part of the productivity equation. If AI spending rises fast, someone eventually asks what it replaces, what it amplifies, and whether the company is getting enough useful output per dollar.

This is why AI developer productivity is not just a team dashboard problem.

It is tied to hiring plans, platform investment, finance narratives, and the uncomfortable question of whether software teams are turning AI spend into durable software or expensive theater.

I don’t think engineers need to obsess over the company’s finances, unless they work at a startup and fear it will go bankrupt soon.

But I do think we need the big picture here. If you want AI tooling, agents, internal platforms, and bigger budgets, you need to explain the value in a language that survives outside the engineering floor.

“The team likes it” will not be enough.

“We are doing the same with fewer people, so we are reducing our workforce by 10%” is a strong narrative. Just check the stock prices of those companies after they announce layoffs. They go up because the company suddenly makes the same money with 10% lower workforce costs.

That’s a hard truth for humans, but it’s the trend in the industry.

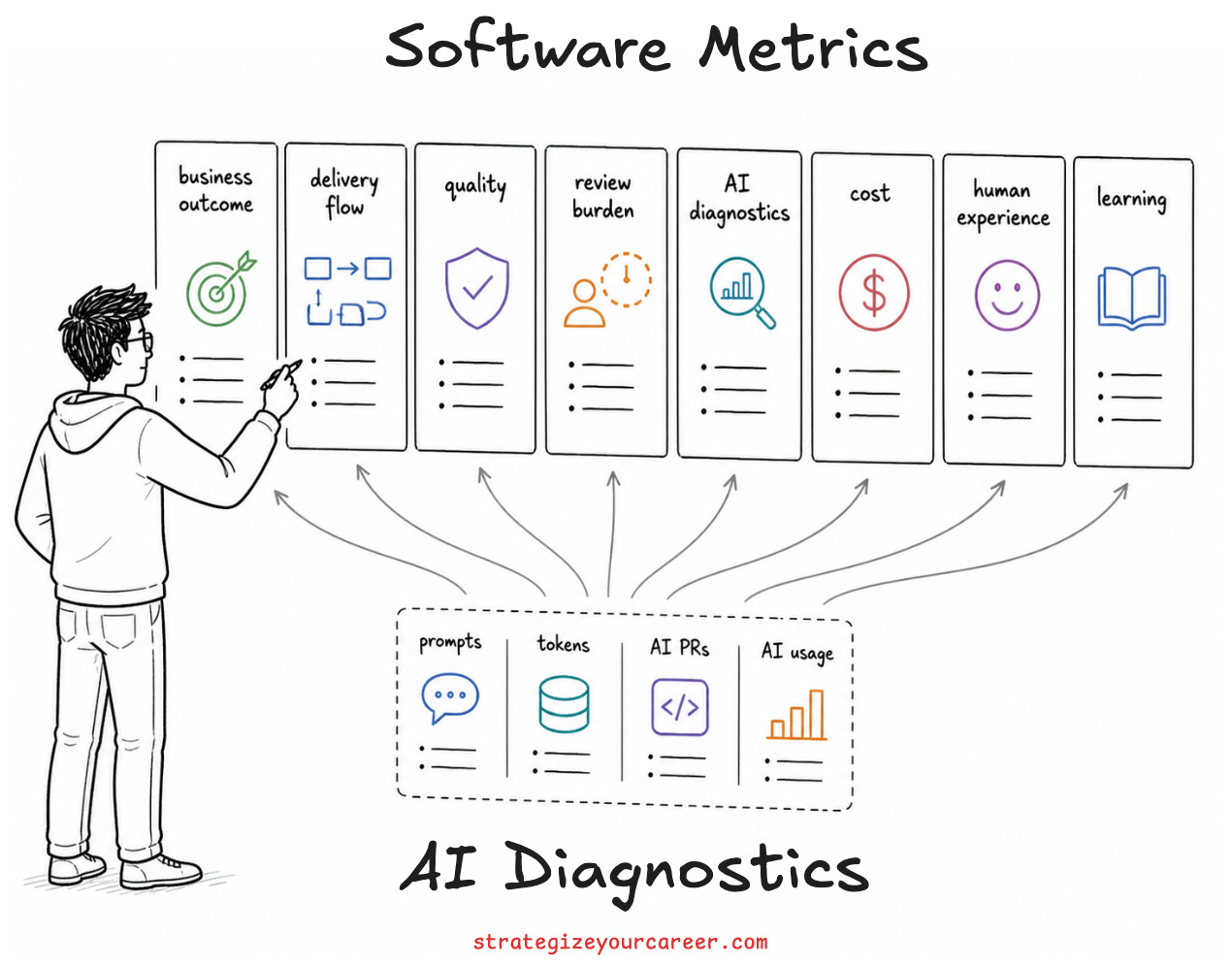

How to Measure AI Developer Productivity

If I had to measure AI developer productivity for a team, I would not start with tokens. I would start with this question.

“Are we delivering valuable, reliable software with less scarce human attention?”

Here is a practical version:

Business outcome: Track customer impact, adoption, and incidents avoided.

Delivery flow: Track lead time, cycle time, and time to merge.

Quality: Track production defects, revert rate, and build success.

Review burden: Track review time, comment volume, and rework loops.

AI diagnostics: Track active users, requests, and retained AI output.

Cost: Track cost per merged PR, task, or workflow.

Human experience: Track cognitive load, flow, and satisfaction.

Learning: Track onboarding speed, explainability, and ownership.

That means you do not replace DORA with Copilot dashboards. You keep DORA, cycle time, defects, review time, cognitive load, and customer outcomes. Then you add AI diagnostics to understand what changed.

This prevents the classic dashboard lie.

If AI usage goes up and lead time goes down while quality stays flat, good. Keep going.

If AI usage goes up and PR size doubles, review time grows, and defects rise, you did not gain productivity. You moved work from authors to reviewers and sold it as progress.
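
The two readings above can be written down as a small check. This is only a sketch: the `Snapshot` fields cover a subset of the scorecard, and the field names, numbers, and return messages are illustrative assumptions, not a standard.

```python
from dataclasses import dataclass


@dataclass
class Snapshot:
    """Hypothetical team-level numbers for one measurement period."""
    ai_requests: int
    lead_time_hours: float
    median_pr_lines: int
    review_hours_per_pr: float
    defects_per_100_changes: float


def read_scorecard(before: Snapshot, after: Snapshot) -> str:
    """Interpret a rise in AI usage against delivery, review, and quality."""
    if after.ai_requests <= before.ai_requests:
        return "AI usage did not increase"

    pr_size_doubled = after.median_pr_lines >= 2 * before.median_pr_lines
    review_time_grew = after.review_hours_per_pr > before.review_hours_per_pr
    defects_rose = after.defects_per_100_changes > before.defects_per_100_changes
    if pr_size_doubled and review_time_grew and defects_rose:
        return "work moved from authors to reviewers"

    lead_time_down = after.lead_time_hours < before.lead_time_hours
    if lead_time_down and not defects_rose:
        return "keep going"

    return "mixed signals, look closer"


baseline = Snapshot(1000, 48.0, 150, 1.0, 4.0)
healthy = Snapshot(3000, 36.0, 160, 1.0, 4.0)
theater = Snapshot(3000, 50.0, 320, 2.5, 7.0)

print(read_scorecard(baseline, healthy))  # keep going
print(read_scorecard(baseline, theater))  # work moved from authors to reviewers
```

Both `healthy` and `theater` tripled their AI usage. Only the outcome metrics tell them apart.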

How to Use AI Without Faking Productivity

The personal rule I use is simple: use AI first for leverage, not for abdication.

Here are the rules I would give any engineer trying to use AI well:

Use AI to understand the surroundings before changing them.

Write down the behavior you want before asking for code.

Keep PRs small and focused on one deliverable.

Build prompt libraries and skills for recurring work.

Review your own prompts before submitting, and check whether another human would understand what you mean.

Review AI output as if it came from a very fast junior teammate with no accountability.

The last one is the most important.

AI is fast. It is not responsible.

Responsibility is still yours.

Common AI Developer Productivity Mistakes

The most common mistake is measuring adoption and calling it productivity.

Adoption matters, but it’s not the only thing. I want engineers to try the tools. I want teams to learn where AI helps. Just as I write this newsletter publicly, I share what I learn about AI with my peers at work. But adoption is the start of the measurement conversation, not the end.

Using AI to make PRs bigger. This feels productive for about three hours. Then the reviewer opens the diff, sees 900 lines across five concerns, and the team pays the bill in comments, confusion, and rework. AI should make work smaller and more reviewable, not larger and harder to reason about.

Accepting generated tests that test the implementation instead of the behavior. This is everywhere. The model sees the function, writes a test that mirrors the function, and everybody feels better because coverage went up. Then production hits the case nobody modeled.

Outsourcing understanding. If you cannot explain a critical AI-generated change, you do not own it. You are renting confidence from a system that will not join the incident call.

Ignoring cost until finance notices. That is a bad moment to start learning how your agents spend money. Finance will take the agents away from you.
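
To make the generated-tests mistake above concrete, here is a minimal sketch. `apply_discount` is a hypothetical function I made up for the example. The first test mirrors the implementation, and the second states the behavior we actually want.

```python
def apply_discount(price: float, percent: float) -> float:
    """Return the price after a percentage discount (hypothetical example)."""
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    return price * (1 - percent / 100)


def test_mirrors_implementation():
    # Restates the function's own formula. Any misunderstanding baked into
    # the formula is baked into the test too, and edge cases stay untested.
    price, percent = 80.0, 25.0
    assert apply_discount(price, percent) == price * (1 - percent / 100)


def test_states_behavior():
    # Expected values come from the requirement, not from the code.
    assert apply_discount(80.0, 25.0) == 60.0   # a quarter off 80 is 60
    assert apply_discount(80.0, 0.0) == 80.0    # no discount changes nothing
    assert apply_discount(80.0, 100.0) == 0.0   # a full discount is free
    try:
        apply_discount(80.0, 150.0)             # impossible discount
    except ValueError:
        pass
    else:
        raise AssertionError("expected ValueError for percent > 100")


test_mirrors_implementation()
test_states_behavior()
```

Both tests pass and both raise coverage. Only the second one would catch a wrong formula or a missing input check.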

Conclusion: AI Developer Productivity Is a Systems Problem

I am not anti-AI. That would be absurd. I use these tools every day, and I think engineers who refuse to learn them are making their careers harder than they need to be.

But I am very against lazy measurement.

High AI usage can be a real edge for engineers. But it becomes theater when the metric rewards consumption instead of judgment.

The engineers who compound will not be the ones who burn the most tokens. They will be the ones who turn AI into repeatable workflows that ship valuable, reliable software with less scarce human attention.

That is the goal.

Not prompts, tokens, or a leaderboard rank.

Useful software, shipped responsibly, with less human cost, and with the human still owning the judgment.

Recap of the article

Does AI improve developer productivity?

AI improves developer productivity when it reduces the human attention needed to ship valuable, reliable software. It helps most with bounded tasks, boilerplate, tests, documentation, search, and unfamiliar code comprehension. It can hurt productivity when the real bottleneck is architecture, mature-codebase context, review burden, correctness, or debugging ambiguity.

Why do AI developer productivity studies disagree?

AI developer productivity studies disagree because they measure different developers, tools, task types, time periods, and codebase contexts. A bounded lab task is not the same as a senior engineer changing a mature production repository. AI is not a universal speed multiplier. It is a task-shape multiplier.

What metrics should teams use for AI developer productivity?

Teams should keep outcome metrics such as DORA, cycle time, review time, production defects, reverts, and developer experience. Then they should add AI diagnostics such as active usage, retained AI output, intervention count, rework, AI-created PR share, and cost per shipped change. AI metrics should explain outcomes, not replace them.

Is token usage a good AI productivity metric?

Token usage is not a good productivity metric by itself. It is an adoption metric and a cost metric. High token usage can mean serious workflow automation, or it can mean wasteful prompting that produces nothing durable.

What is tokenmaxxing?

Tokenmaxxing is treating tokens consumed, prompts sent, or AI leaderboard rank as proof of productivity. It is useful as a warning label because it shows how easily teams can confuse consumption with value. Tokens are the new lines of code: easy to count, easy to game, and dangerous when treated as the goal.

If you want to go deeper on turning AI from random prompting into a real engineering system, read this article next.

In my experience, AI tokens usually end up as a measurement because there is another, invisible metric in the organization: “AI adoption rate.” Organizations try to put AI everywhere, so they encourage engineers to burn as many tokens as possible on coding, code review, operations, inquiry bots, and so on. Those who put AI everywhere in their process win and help move this invisible metric up. This is also how upper management brags to stakeholders about how advanced their org’s workflow is.