Harness Engineering: Turning AI Agents Into Reliable Engineers

Learn how harness engineering uses deterministic scripts, guardrails, and state management to turn AI coding agents into production systems that ship real code.

Most AI coding agents can write impressive demos. Few can ship production code without breaking everything around it. The difference is harness engineering: the discipline of building systems that make AI agents reliable.

Here is how I used it to ship 100+ PRs/month at Amazon.


I’m Fran. I’m a software engineer at Amazon during the day, and I write and experiment with AI during the night.

I want to tell you about the moment I realized that prompting alone would never work for production AI agents.

I was working on an automation project at Amazon. The goal was simple: update large JSON configuration files automatically based on requirements. These configs were thousands of lines long, and the updates followed predictable patterns.

A perfect job for an AI agent, right? That’s what everyone thought. Engineers on the team opened their AI-powered IDE or CLI, typed their prompts to modify the JSONs, and watched the LLM struggle to apply the change without breaking the rest of the file.

It failed to implement the changes properly. Every single time.

The model wasn’t broken. We were on Opus 4.6 with a one-million-token context window.

The context window was a problem. When you feed multiple 10,000-line JSON files into an LLM, the model loses track of the surrounding structure. It edits what you asked it to edit, but it quietly breaks everything around it. No error message. No warning. Just a structurally invalid file that passes a surface-level glance but fails in production.

This is not a model quality problem. It is an environment problem. And the fix is not a better prompt.

You may think the fix is for Anthropic to release a 10M-token context window, but we know that performance still degrades after 100k or 200k tokens, no matter how big the window is.

The real fix is a harness.

Harness engineering is the discipline that turned my broken prototype at Amazon into a system that now ships over 100 PRs per month. Fully autonomous.

I wrote a 10-step guide to building that agent in a previous post.

In this post, you’ll learn:

What harness engineering is and how it differs from prompt engineering, context engineering, and agent engineering

Why AI agents fail on large structured files like JSON, and how to fix it with deterministic scripts

The four pillars of a production AI harness: state management, context architecture, guardrails, and entropy management

How I built a harness at Amazon that ships 100+ PRs/month without human intervention

The mindset shift that separates engineers who demo AI from engineers who deploy it

Why AI Agents Fail on Large Files

Most engineers today interact with AI coding tools the same way: open Cursor, Claude Code, or Codex, type a prompt, review the output, repeat. For small files and isolated tasks, this works beautifully. But the moment the problem involves a large number of files, the whole approach falls apart.

Large Language Models are probabilistic engines. They predict the next token based on patterns in their context window. When the context window is filled with thousands of lines of structured data, the model’s attention gets diluted. It correctly identifies the node you want to modify, but it loses track of sibling keys, nested brackets, and structural integrity. The result is a file that looks right at the point of change but is broken somewhere else.

We have to understand that a context window isn’t the same as context attention. As a human, I can keep hundreds of items in a storage unit, but I will remember only a fraction of what is in there.

The same goes for LLMs. As the context window fills up, performance degrades (and costs rise).

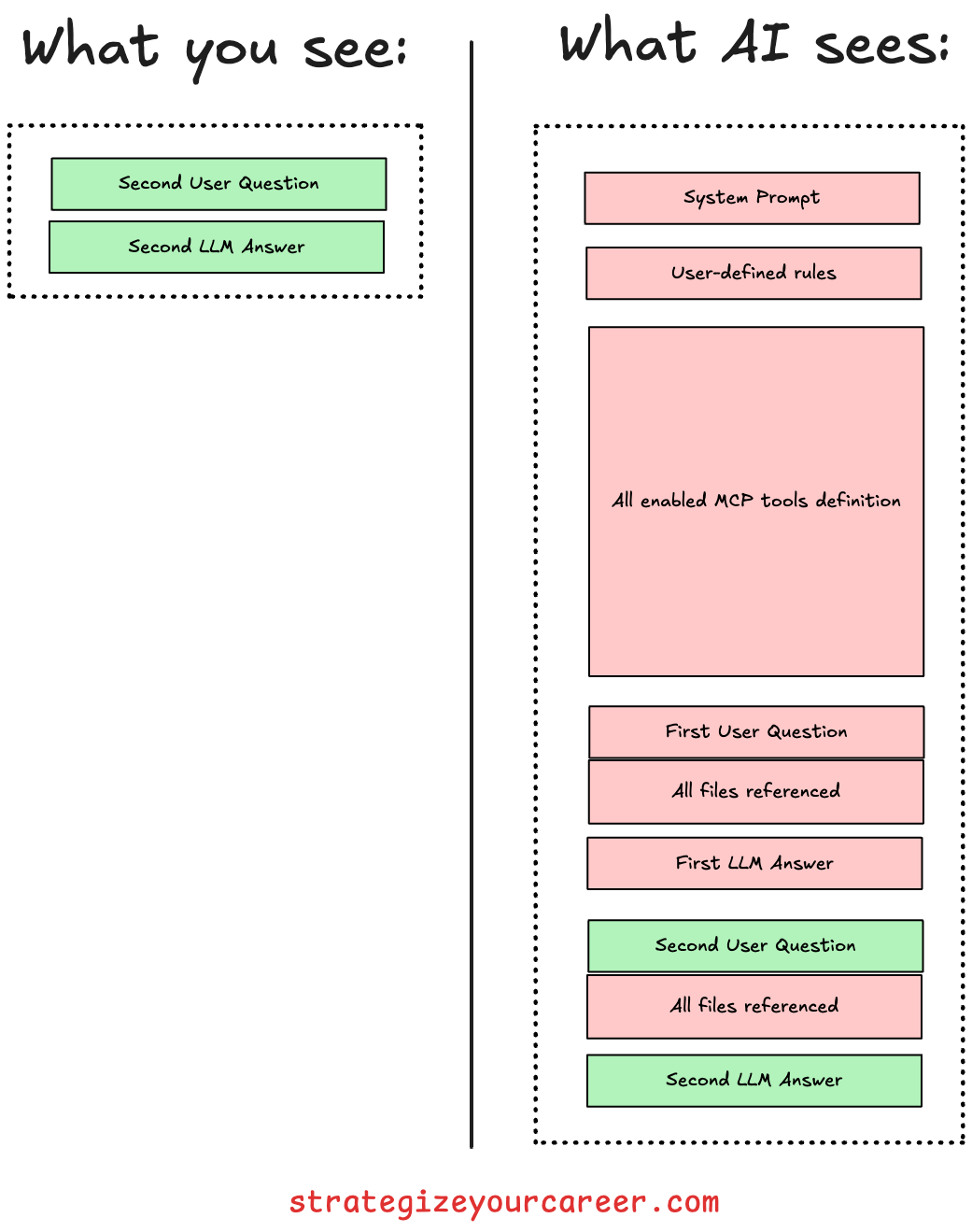

Did you know that every message you send resends the entire previous conversation in the API call? Yes, you’re billed for those past messages too. The servers in the cloud don’t keep any conversation state; they only have a cache.
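To make the billing point concrete, here is a rough back-of-the-envelope sketch. It assumes every request resends the full history as input tokens and ignores caching discounts and output-token pricing. The function name and the token counts are made up for illustration.

```python
def billed_input_tokens(tokens_added_per_turn):
    """Return the input tokens billed on each turn when the whole
    history is resent with every request (no caching discount)."""
    billed = []
    history = 0
    for added in tokens_added_per_turn:
        history += added         # the new content joins the history
        billed.append(history)   # the whole history is sent as input
    return billed


# Hypothetical conversation: a 50k-token file, then four 1k-token follow-ups.
turns = [50_000, 1_000, 1_000, 1_000, 1_000]
per_turn = billed_input_tokens(turns)
print(per_turn)       # [50000, 51000, 52000, 53000, 54000]
print(sum(per_turn))  # 260000
```

The conversation only contains 54k tokens of content, but under these assumptions you pay for 260k input tokens, because the big file is billed again on every turn.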

When the model fails to make an update, the instinct is to write a better prompt.

Add more constraints.

Tell the model to “preserve the surrounding structure.”

“Make no mistakes.”

But that is like asking someone to juggle while blindfolded and then giving them more detailed instructions about hand positioning. The problem is not the instructions. The problem is the blindfold.

The context window itself becomes a liability when it’s packed with thousands of lines of repetitive structure. No prompt can fix that.

I covered how to scale AI by setting up guardrails in a previous post.

What Is Harness Engineering?

Harness engineering is the discipline of designing the systems, architectural constraints, execution environments, and automated feedback loops that wrap around AI agents to make them reliable in production.

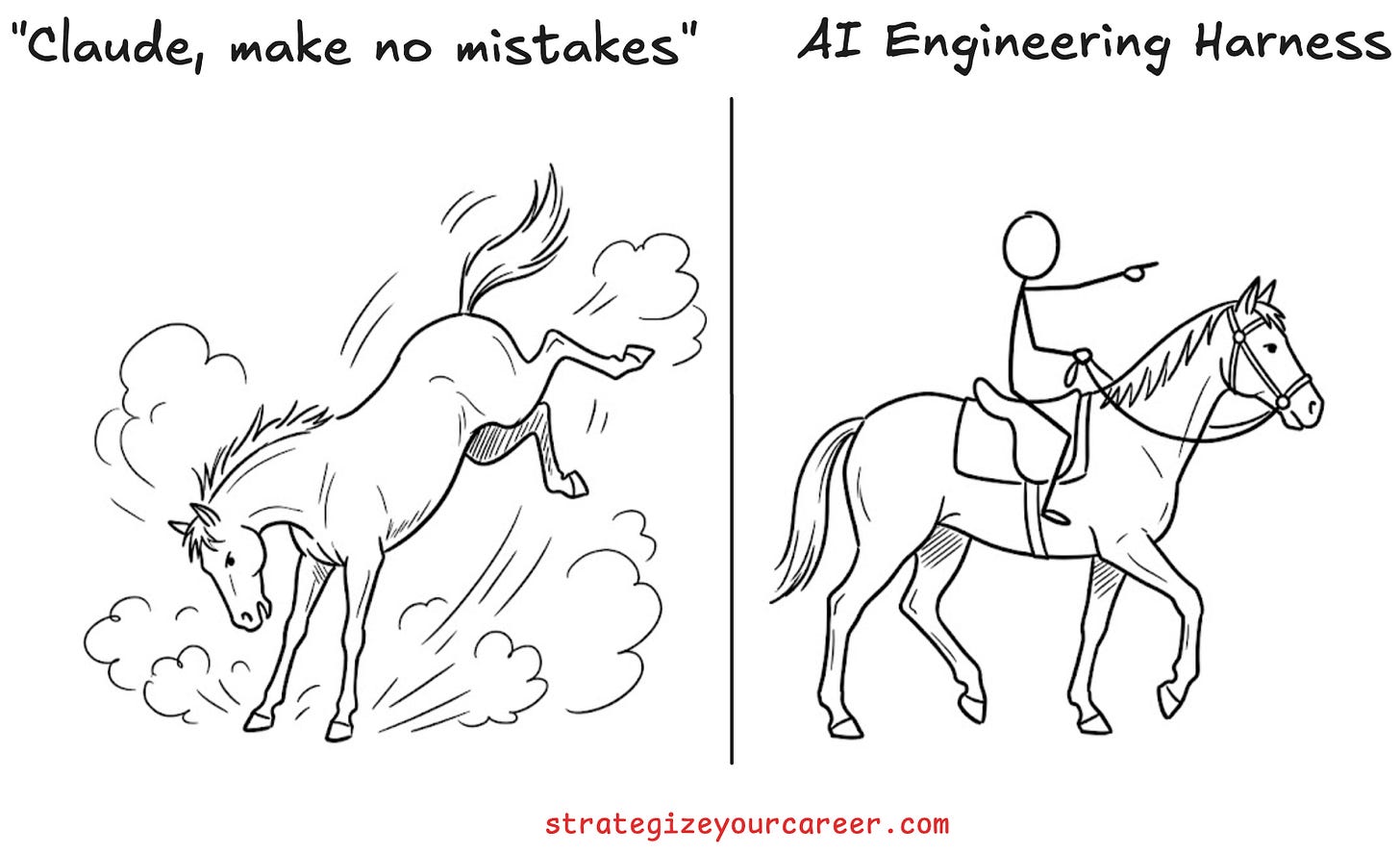

The term was coined by Mitchell Hashimoto, co-founder of HashiCorp. The metaphor comes from horse riding. Think of the LLM as a powerful horse. It has raw energy, speed, and strength. But without reins, a saddle, and a bridle, that energy is undirected and potentially destructive (the horse kicks you, the LLM runs rm -rf, and I don’t know which is worse). The harness allows the rider to direct the horse’s power productively.

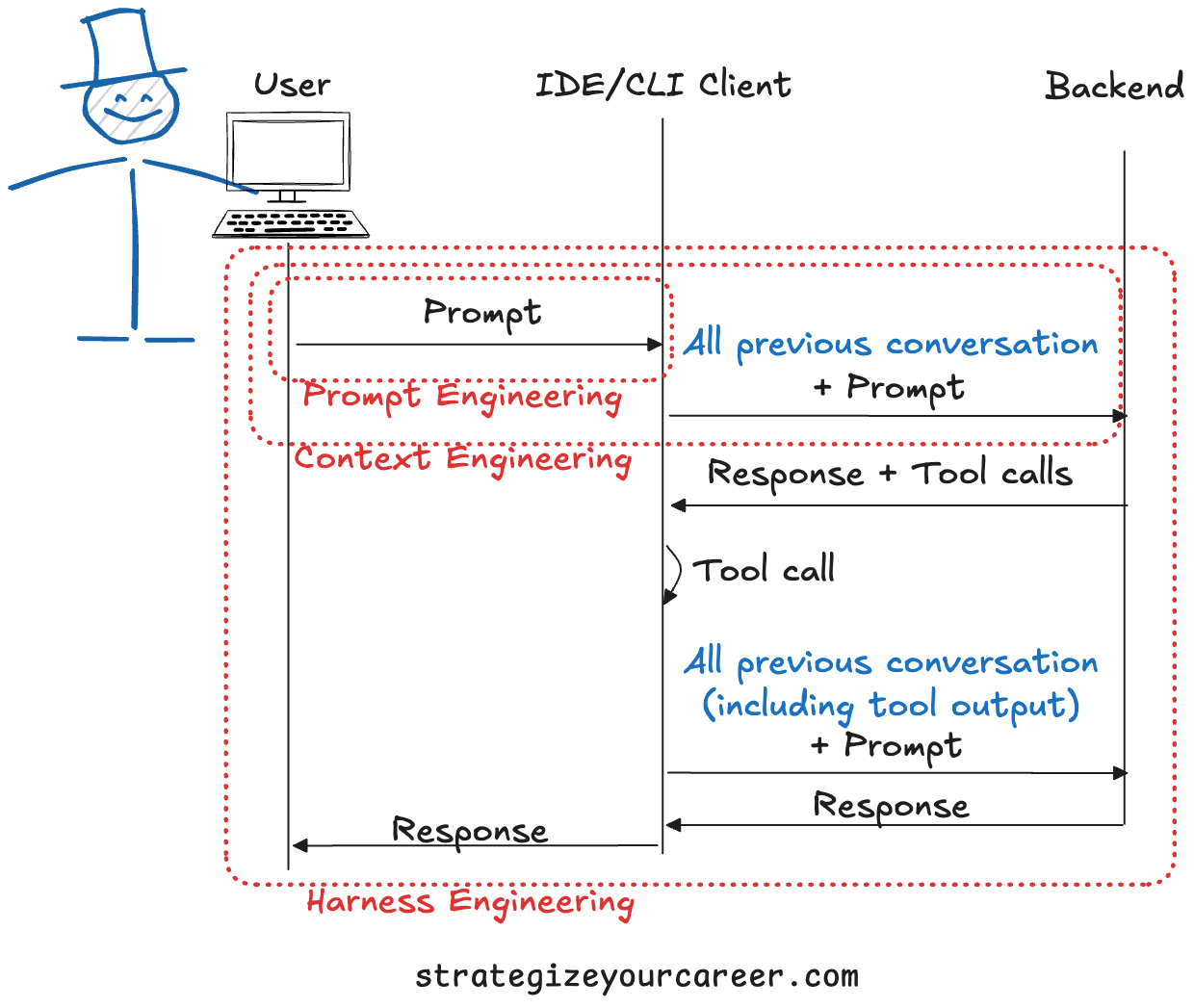

To understand where harness engineering fits, here’s how it relates to the other disciplines you’ve probably heard about:

Prompt Engineering → Crafts the best input for a single request-response interaction.

Context Engineering → Controls what the model sees across a whole session (multiple interactions, until the context is cleared).

Harness Engineering → Designs the environment, tools, guardrails, and feedback loops that persist across multiple sessions.

Agent Engineering → Designs the agent’s internal reasoning loop (for example, defining specialized agents).

Platform Engineering → Provides the infrastructure for deployment, scaling, and cloud operations (where agents run).

Prompt engineering is about what you say to the model.

Context engineering is about what the model sees.

Harness engineering is about the entire world the model operates in. It includes the tools the agent can call, the constraints it cannot violate, the documentation structure it reads, and the automated feedback loops that catch its mistakes before they reach production.
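An automated feedback loop can be as small as the sketch below: ask the agent for output, validate it, and feed the error back until it passes or the attempts run out. Everything here is a hypothetical illustration. `run_with_feedback`, `validate_json`, and `fake_agent` are invented names, and `fake_agent` is a stand-in for a real model call.

```python
import json


def run_with_feedback(propose, validate, max_attempts=3):
    """Ask the agent for output until it validates or attempts run out.

    propose(feedback) -> str        stand-in for a call to the agent
    validate(output) -> str | None  error message, or None if acceptable
    """
    feedback = None
    for attempt in range(1, max_attempts + 1):
        output = propose(feedback)
        feedback = validate(output)
        if feedback is None:
            return output, attempt
    raise RuntimeError(f"gave up after {max_attempts} attempts: {feedback}")


def validate_json(text):
    """Return None for valid JSON, otherwise a short error message."""
    try:
        json.loads(text)
        return None
    except json.JSONDecodeError as exc:
        return f"invalid JSON: {exc.msg} at line {exc.lineno}"


# Fake agent for the demo: wrong on the first try, corrected after feedback.
def fake_agent(feedback):
    return '{"retries": 5}' if feedback else '{"retries": 5'


result, attempts = run_with_feedback(fake_agent, validate_json)
print(result, attempts)  # {"retries": 5} 2
```

The cap on attempts matters: without it, an agent that never converges would loop forever instead of failing loudly.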

How I Built a Harness That Ships 100+ PRs/Month at Amazon

Let me walk you through the specific problem I solved, because abstract talk about agents only becomes useful when you see it applied to a real constraint.

The problem: We had large JSON configuration files that needed automated, repetitive updates. These files were too big for the LLM to handle reliably in its context window. Every manual update was tedious, error-prone, and time-consuming.

What everyone else tried: Engineers on the team opened their IDEs and started prompting. The LLM would correctly modify the target node, but would fail to identify which other files had to be updated, and it would fail to keep the correct JSON structure. There was no awareness of JSON structural integrity as a hard constraint. Every run was a coin flip. Sometimes it worked. Most times it broke. You can’t trust an AI like this.

The harness approach: Instead of trying to improve the prompt, I narrowed the problem to one specific operation: how to read and write our JSON files. I wasn’t trying to build a general-purpose agent. I built a scoped one. I wrote deterministic Python scripts to handle the actual JSON surgery: read the file, apply a precise modification, validate the structure, write it back. The agent’s only job was to provide the intent: the what and the where. The script provided the execution guarantee.
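Here is a minimal sketch of what a read, modify, validate, write script could look like. It is an illustration, not the production script: `set_json_value`, the key-path convention, and the sample config are all assumptions. It also assumes that rewriting the file with two-space indentation is acceptable.

```python
import json
import os
import tempfile


def set_json_value(path, key_path, new_value):
    """Deterministically set one existing value in a JSON file.

    key_path is a list of dict keys / list indexes leading to the node.
    The function raises instead of guessing when the path is wrong.
    """
    with open(path, encoding="utf-8") as f:
        doc = json.load(f)                # read: fails loudly on invalid JSON

    parent = doc
    for key in key_path[:-1]:
        parent = parent[key]              # KeyError/IndexError on a bad path
    last = key_path[-1]
    if isinstance(parent, dict) and last not in parent:
        raise KeyError(f"target node not found: {key_path}")
    parent[last] = new_value              # apply exactly one modification

    text = json.dumps(doc, indent=2, ensure_ascii=False) + "\n"
    json.loads(text)                      # validate the serialized result

    # Write to a temp file and swap it in, so a crash never leaves
    # a half-written config behind.
    directory = os.path.dirname(os.path.abspath(path))
    fd, tmp = tempfile.mkstemp(dir=directory)
    with os.fdopen(fd, "w", encoding="utf-8") as f:
        f.write(text)
    os.replace(tmp, path)


# Usage with a throwaway file and a hypothetical config shape.
with tempfile.TemporaryDirectory() as d:
    cfg = os.path.join(d, "config.json")
    with open(cfg, "w", encoding="utf-8") as f:
        json.dump({"services": [{"name": "api", "timeout": 30}]}, f)
    set_json_value(cfg, ["services", 0, "timeout"], 60)
    with open(cfg, encoding="utf-8") as f:
        print(json.load(f)["services"][0]["timeout"])  # 60
```

The LLM never sees or emits the full file. The only inputs are the path to the node and the new value, so sibling keys and nested brackets cannot drift.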

The key insight was this: the agent calls the script as a tool. It does not generate JSON directly. It tells the script what to change, and the script changes it with zero ambiguity. This means the AI is the brain that chooses which steps to take, like a CEO setting direction. The AI doesn’t have to do the groundwork itself.
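As a sketch of that division of labor, the agent can emit a small structured tool call and the harness can dispatch it to deterministic code. The call format, the `set_value` tool, and the `TOOLS` registry are hypothetical and not tied to any specific agent framework.

```python
import json


def set_value(doc, key_path, value):
    """Deterministic tool: set one value inside an in-memory document."""
    node = doc
    for key in key_path[:-1]:
        node = node[key]
    node[key_path[-1]] = value
    return doc


# Hypothetical tool registry: only these operations are available to the agent.
TOOLS = {"set_value": set_value}


def run_tool_call(doc, raw_call):
    """Execute one tool call emitted by the agent as a JSON string.

    The agent supplies only the intent (which tool, where, what value).
    Unknown tools and malformed calls are rejected, never guessed at.
    """
    call = json.loads(raw_call)
    if not isinstance(call, dict) or call.get("tool") not in TOOLS:
        raise ValueError(f"unknown or malformed tool call: {raw_call!r}")
    return TOOLS[call["tool"]](doc, **call.get("args", {}))


# What the model might emit instead of a rewritten 10,000-line file:
agent_output = (
    '{"tool": "set_value", '
    '"args": {"key_path": ["limits", "max_retries"], "value": 5}}'
)
config = {"limits": {"max_retries": 3, "timeout": 30}}
print(run_tool_call(config, agent_output))
# {'limits': {'max_retries': 5, 'timeout': 30}}
```

The model’s output shrinks from thousands of lines to one short call, which is far easier to get right and to audit.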

I then added a structural validation step as a guardrail. If the resulting JSON is malformed, the agent cannot proceed. It physically cannot ship a broken config. This provides a feedback loop, which is something managers and C-level executives also want when delegating to humans.
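One way to sketch such a guardrail: parse the result, flatten both versions into path-to-value maps, and reject any change outside the paths the task was allowed to touch. `flatten`, `check_edit`, and the sample documents are assumptions for illustration. A real check would likely add schema validation on top.

```python
import json

_MISSING = object()  # sentinel for "this path does not exist"


def flatten(node, prefix=()):
    """Map every leaf of a JSON document to its path (a tuple of keys)."""
    if isinstance(node, dict) and node:
        items = node.items()
    elif isinstance(node, list) and node:
        items = enumerate(node)
    else:
        return {prefix: node}  # scalars and empty containers are leaves
    flat = {}
    for key, child in items:
        flat.update(flatten(child, prefix + (key,)))
    return flat


def check_edit(before_text, after_text, allowed_paths):
    """Return a list of violations; an empty list means the edit may ship."""
    try:
        after = json.loads(after_text)
    except json.JSONDecodeError as exc:
        return [f"malformed JSON: {exc}"]
    old, new = flatten(json.loads(before_text)), flatten(after)
    violations = []
    for path in sorted(old.keys() | new.keys(), key=str):
        changed = old.get(path, _MISSING) != new.get(path, _MISSING)
        if changed and path not in allowed_paths:
            violations.append(f"unexpected change at {list(path)}")
    return violations


before = '{"a": {"x": 1, "y": 2}, "b": [1, 2]}'
good = '{"a": {"x": 9, "y": 2}, "b": [1, 2]}'
bad = '{"a": {"x": 9}, "b": [1, 2]}'  # sibling key "y" silently dropped
allowed = {("a", "x")}

print(check_edit(before, good, allowed))  # []
print(check_edit(before, bad, allowed))   # ["unexpected change at ['a', 'y']"]
```

A non-empty list blocks the pipeline, and the same messages can be handed back to the agent as feedback for the next attempt.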

The result: 100+ PRs per month. Zero structural corruption. Fully autonomous. The system has been running for months, and after a few weeks of tweaking edge cases in the deterministic scripts, the agent nails the updates.

At some point, we realized that the only reason a PR gets rejected is that the requirement was wrong, not that the AI failed to execute it.

That’s when you know you are onto something good.

If you want to build agents that ship production code instead of only doing demos, the paid section that follows breaks down the exact harness framework I use: state, context, guardrails, and entropy control.