How to scale a relational database

Relational DBs struggle at scale. This guide helps you choose the right strategy, from indexing to sharding, without overengineering too soon.

Get the free AI Agent Building Blocks ebook when you subscribe:

Relational databases give us power, structure, and consistency.

But when they start groaning under scale, it’s easy to make the wrong call, like over-indexing without measuring, or sharding too early.

In the past couple of years, I’ve worked on database migration infrastructure, failover testing, and performance debugging across several teams.

What I’ve learned is this: scaling is less about clever tricks and more about knowing your workload, picking the right trade-off, and understanding your system's weakest link.

⭐ In this post, you'll learn

When to use vertical scaling before diving into distributed complexity

Which low-effort improvements give high impact (like indexing and caching)

How to scale reads and writes independently with replication and sharding

Techniques for managing large datasets with partitioning and archiving

How to choose the right strategy using a practical decision framework

🏗️ #1 Introduction: why scaling relational databases is hard

Relational databases are great until they aren't. They work well out of the box for most startups, but as load increases, you hit limits fast. Especially with traditional RDBMS like PostgreSQL or MySQL, which were never built for massive scale or multi-region writes.

You can throw more hardware at the problem (vertical scaling) or split data across machines (horizontal scaling). Both options come with cost and complexity. Scaling decisions also affect your reliability, performance, and how much your team can actually maintain long term.

Say your PostgreSQL instance starts timing out during peak hours. Replication? Indexing? Sharding? This post will help you pick the right next move.

📈 #2 Vertical scaling: the obvious but limited option

Vertical scaling is simple. Add more CPU, memory, or SSDs to your database server. It usually works—until it doesn’t.

If CPU is maxed, bump the instance size. If IOPS are the problem, switch to provisioned volumes. The upside: no app changes. The downside: there's always a ceiling. And the bill is more expensive each time.

I’ve seen teams delay proper fixes for too long by relying on vertical scaling. Once, I had a PostgreSQL with queries taking too long. One could have thought having a more powerful instance would help, but the existing instance was already underutilized. The root issue was inefficient query plans and missing indexes for expensive queries.

Use vertical scaling early on. But as traffic grows or your dataset gets larger, it becomes a temporary band-aid rather than a long-term fix.

🧮 #3 Indexing: the low-hanging fruit of performance

Indexes are often the fastest way to fix performance without touching code. They work by creating shortcuts in how the DB finds data.

You’ve got options: B-tree (default), hash, GIN for full-text search, BRIN for time-series data, partial indexes to cover specific cases. I’ve had cases where adding a simple index on user_id reduced join latency by 10x. Some DBs like PostgreSQL even allow you to create functional indexes, like for example indexing a column in lower_case when the original column isn't lower_case, but some query pattern uses the LOWER() function

But there’s no free lunch. Indexes slow down writes and increase storage. They also need maintenance. On my team last year, we noticed a couple of indexes were never used. We were able to remove them after revisiting our query patterns.

Use indexes when read latency is your problem and the access pattern is predictable. Avoid blindly indexing every column—monitor query plans first.

📚 #4 Replication: scaling reads and ensuring high availability

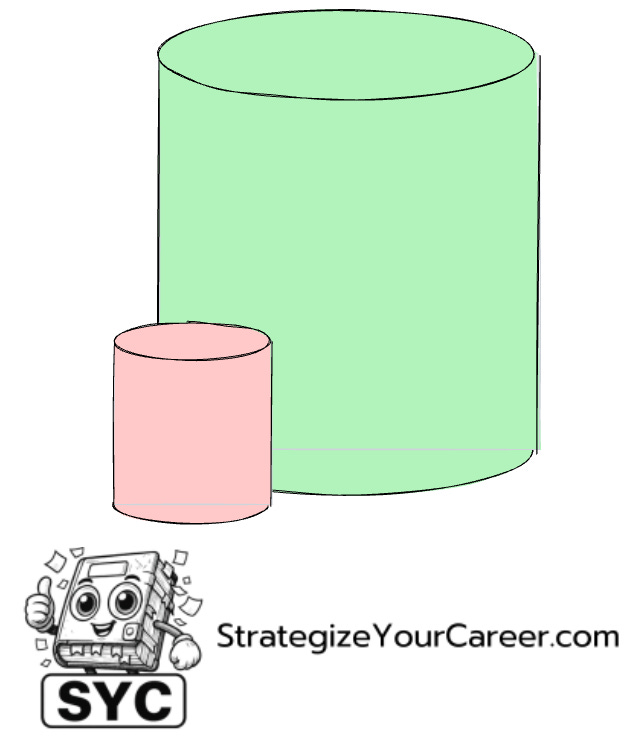

Replication lets you offload reads and improve availability. You copy data from a primary to one or more replicas. There are different modes—async, sync, semi-sync—and they each trade consistency for performance.

Think of your primary as a master chef. The replicas are assistants duplicating their work. It helps you serve more customers, but they might get the orders a few seconds late.

It's not only about scaling, it's also about reliability. Testing instances failover last year, I found ways to cut failover from 45 minutes to 3 minutes by having a read replica ready to become the leader the moment the leader crashed. You never want your writer instance to crash... until it does and you'd rather have 3 minutes downtime instead of 45.

Replication helps in read-heavy workloads and HA setups. Just remember: writes still go to the primary, and replication lag can lead to stale reads. Design accordingly.

🧠 #5 Caching: reducing DB pressure with faster intermediaries

If replication handles read scale, caching handles read frequency. It’s about storing hot data closer to the user, often in memory with Redis, Memcached, or AWS DAX.

This is where you want to know if you have more reads or writes. If you are read-heavy, you can cache this data because it barely changes.

But caching is a trap if you don’t think through invalidation. You could end up with user-facing bugs because the TTLs weren’t tuned or updates didn’t invalidate correctly.

Use caching when the data changes slowly and read throughput matters. Be explicit about how it gets refreshed, and what happens on cache misses.

🚀 Learn even faster

Want a higher salary, more free time, and faster learning?

This newsletter is your shortcut.

👉 Join the 19,000+ engineers already increasing salaries, saving hours, and learning more in less time. Paid subscribers also get these resources and learning tracks.

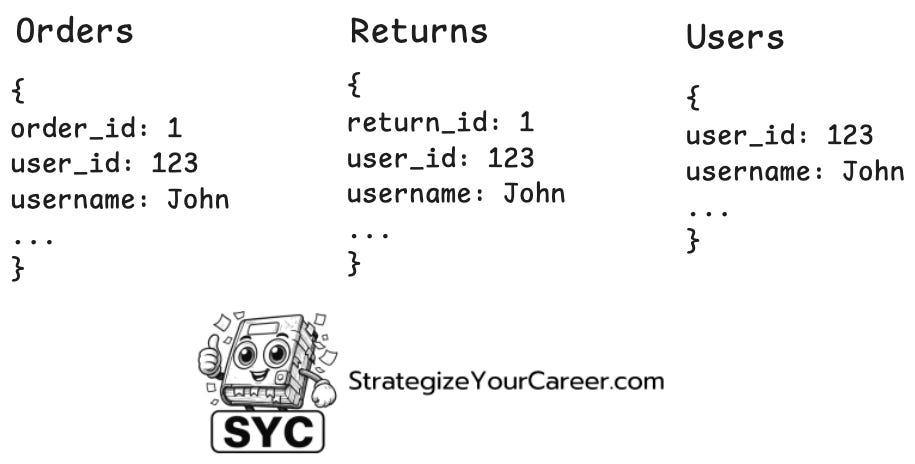

🧾 #6 Denormalization: trading purity for performance

Denormalization is about duplicating data across tables so you avoid joins at read time. It’s a tradeoff: speed in exchange for data duplication.

This works well for analytics, dashboards, or anything where performance matters more than consistency. But you have to think through update paths carefully.

Only denormalize when it’s backed by clear ownership and strong write paths. Don’t do it just to “make queries easier”.