Why Open Source Maintainers Are Done With AI Slop

AI made PRs, issues, and security reports cheap. Maintainer trust, review time, and ownership stayed expensive.

Open source feels broken.

One developer can generate a pull request in minutes.

One security researcher can generate a scary vulnerability report in minutes.

One person can open an issue, write a reproduction that looks technical, add a long explanation, and make it sound serious enough that a maintainer has to stop and look.

But the maintainer may need hours to verify it.

That is the part AI did not automate away.


I have felt both sides of this at work. AI has made my reviews faster when I use it well. I can ask it to inspect a targeted file, check a specific assumption, compare behavior against tests, or formalize a concern I already have. That is useful. It helps me understand the code better.

But the same tool becomes harmful when it produces long generic comments nobody owns. It can challenge every aspect of a PR with words that sound correct but have no depth. It can generate a fix that compiles while missing the architecture. It can create the feeling of progress while moving the real work to someone else.

That is what is happening with AI and open source.

AI is not killing open source as an idea, a license model, or a community. But it is breaking the old assumption that the effort required to submit code, issues, and security reports naturally protects maintainers’ attention.

Open source did not run on code alone.

It ran on trust.

In this post, you’ll learn:

How AI-assisted open-source contributions are changing maintainer trust and project governance

Why AI-generated PRs create more review work for open-source maintainers

How AI security reports are forcing projects like curl and Node.js to gate vulnerability channels

Why open code does not always mean open maintainer attention

How to make responsible AI-assisted open-source contributions that maintainers can trust

Is AI killing open source?

No, AI is not killing open source.

It is killing the old contribution model.

Open-source licenses are not disappearing. Public code is not becoming useless. Communities, shared infrastructure, reusable libraries, transparent development, and public issue trackers still matter. In fact, AI depends on all of them.

But the old contract is breaking.

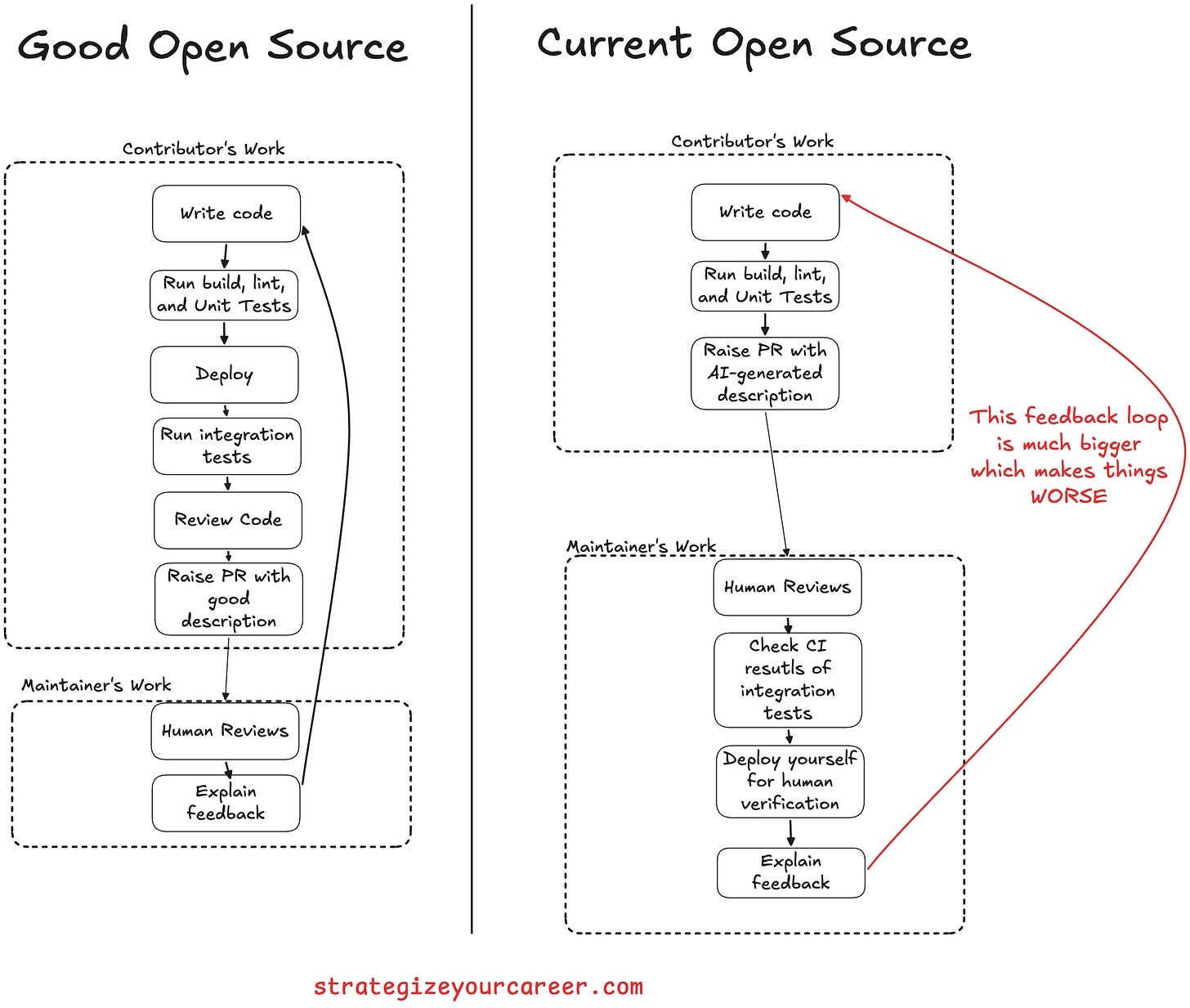

For years, open source worked because contributing required effort. A meaningful PR, a useful issue, or a serious vulnerability report took enough work to signal that the person submitting it probably understood something. That effort fell on the author, and that friction protected maintainers.

AI removes that protection.

Now, a plausible PR can be generated without understanding the code.

The question is not whether open source survives.

It will.

The question is whether the old model of open contribution survives.

I don’t think so.

The moment generating code becomes cheap, the effort shifts to the maintainer.

AI has increased the supply of code faster than maintainers can triage it. It has not yet increased the supply of trust.

That is the real open-source problem: AI is not killing open source. It is killing open contribution as we knew it.

The old open-source deal depended on contribution friction

The old open-source deal was never just “send a PR.”

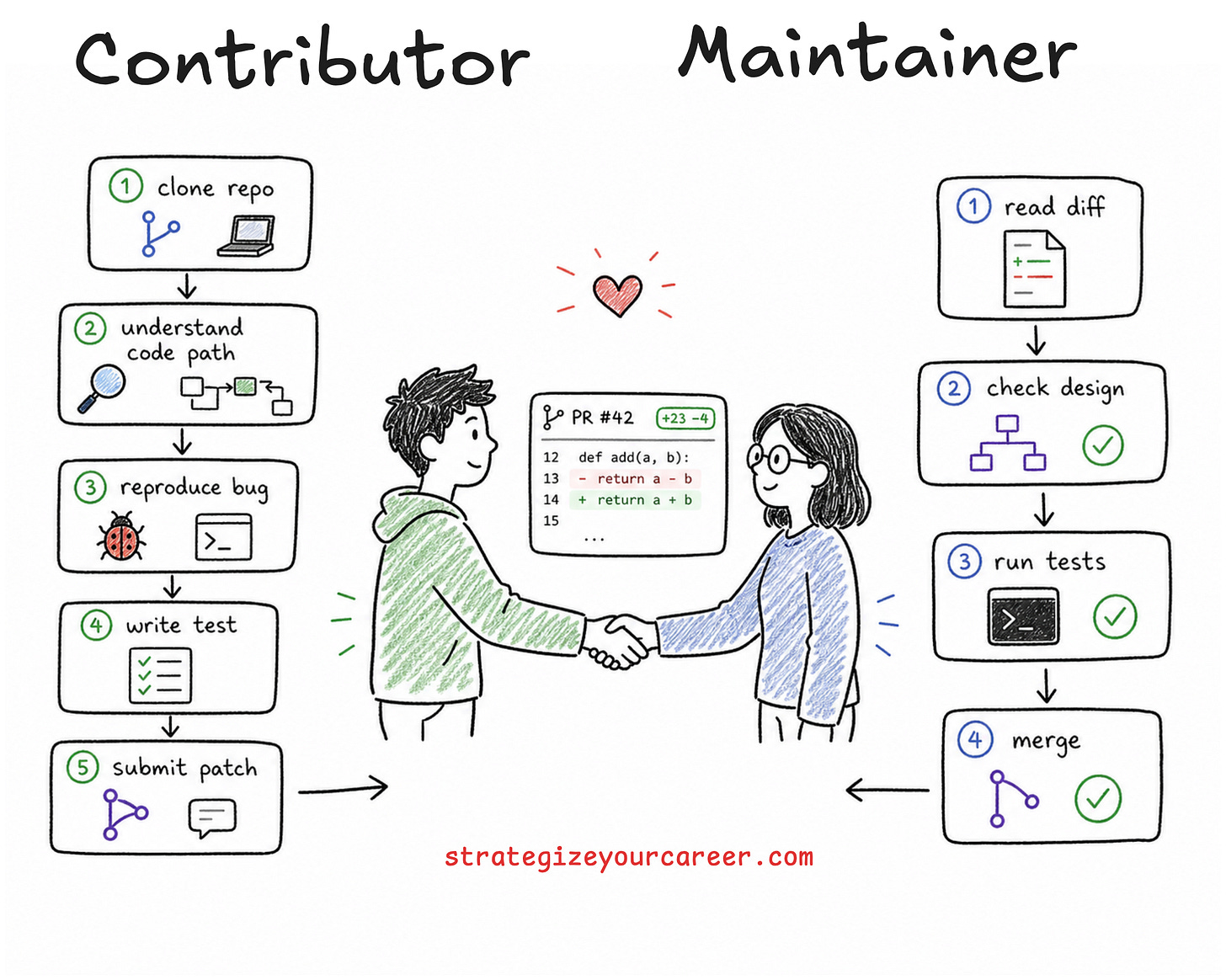

It was closer to this: you spend effort understanding the project, you submit a change, and the maintainer spends attention reviewing it. If the contribution is good, the maintainer is not only accepting a diff. They are making a small bet on you as a future participant.

That bet made sense when creating a useful PR required work. You had to clone the repo, understand the code path, reproduce the problem, make the change, run tests, and explain why it mattered. The effort was not perfect proof of quality, but it filtered out some low-intent contributions.

You needed skin in the game. You had to care.

AI changes the signal. A contributor can now produce something that looks like effort without doing the underlying thinking. The artifact looks serious, but the author never asked whether it was the right change. It just compiles and passes tests. Just because something compiles doesn’t mean it’s the right thing.

This is why the phrase “AI slop” keeps showing up in maintainer discussions. The problem is not that AI touched the work. The problem is that the work arrives without ownership.

I have seen smaller versions of this in code reviews on my team. AI can copy an existing pattern and apply it to the wrong abstraction. It looks reasonable because it resembles other code in the repo, but that doesn’t make it the right thing.

The code works.

The mental model and architecture fall apart.

That is manageable when I am iterating with my own AI agent: I ask, the AI generates, I review, and I prompt again until the output matches my mental model of what is right. It becomes expensive when I hand that first AI output to someone else and ask them to review it and figure it out.


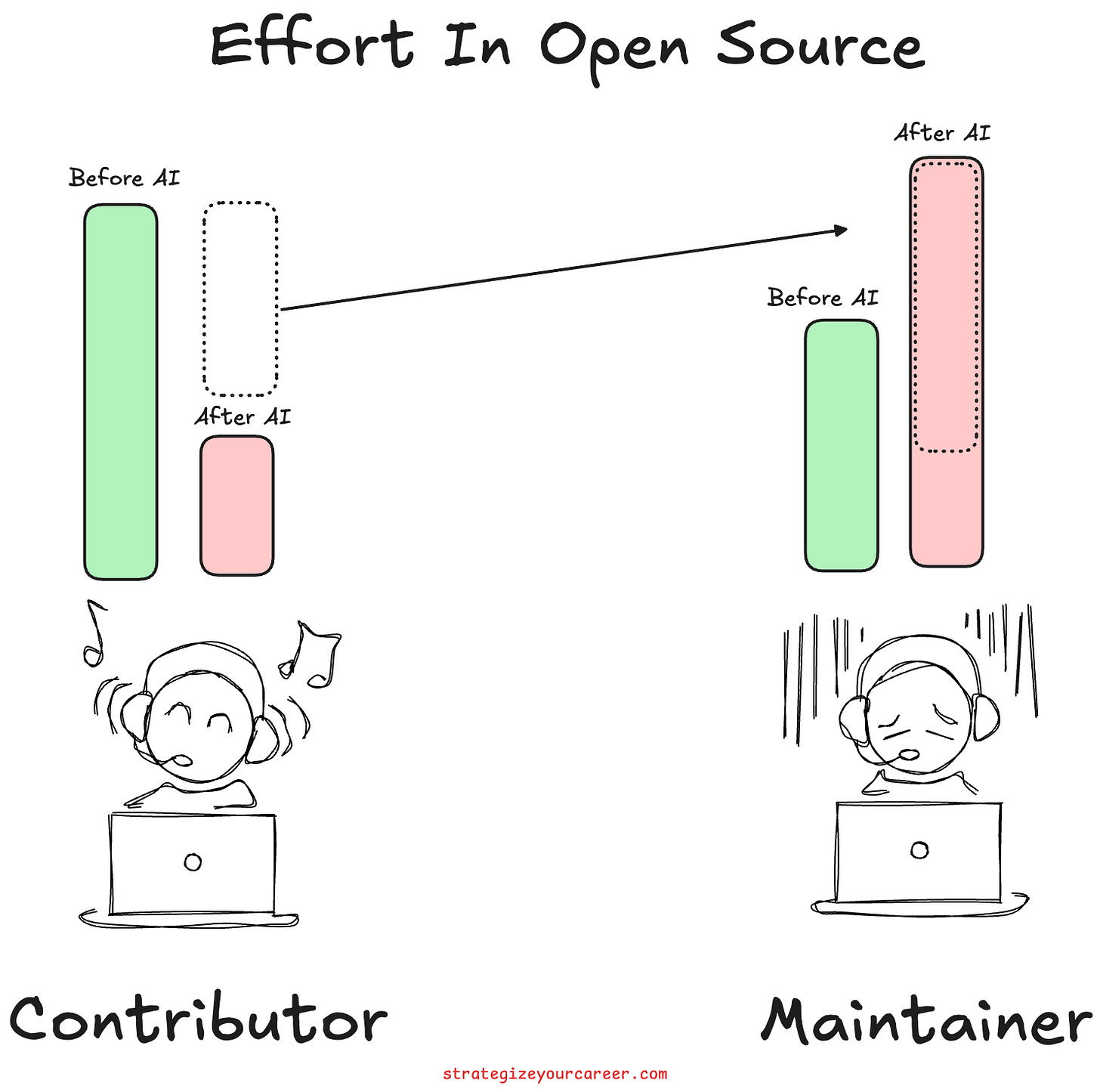

AI made contributions cheap, but maintainer review became more expensive

Generating a diff is cheaper now. Understanding the system is not.

That is the core economics of AI open source. A low-quality AI pull request transfers work from the contributor to the maintainer. The contributor saves time by generating the code. The maintainer pays that time back with interest by checking correctness, style, tests, architecture, maintainability, compatibility, and intent.

The old model worked because there were more contributors than maintainers, which spread the high-effort side of the equation across many people. Now it is the opposite: the high-effort side sits with the few maintainers.

This is why asking an open-source maintainer to “just review it” is not a reasonable request. Reviewing unknown code is not a lightweight activity. A maintainer often has to reconstruct the spec, inspect the affected path, check whether the change fits project direction, think through edge cases, and decide whether to give feedback so the contributor can iterate or to reject the contribution.

The scarce resource in open source was never raw code.

It was trusted human attention from maintainers.

The clearest framing I have seen came from a maintainer’s essay called I don’t want your PRs anymore. The author explains that even before LLMs, writing code was not always the main bottleneck. Understanding, design, and review were. AI made that imbalance more visible.

That matches my own experience. AI helps me when I use it after I have framed the problem. It hurts when I use it to skip framing. The maintainer version of that lesson is clear: maintainers should not be the first people to find your spec gaps during review.

The pressure shows up in five different places: platform volume, PR review load, contribution gates, security-report triage, and business risk.

Collapsing all of these into “open source is dying” misses the useful lesson, so let’s look at each factor in turn.

1/ How AI-amplified volume is changing open-source platforms

Some open-source projects are rethinking their dependency on GitHub and similar platforms because project infrastructure has become part of the trust model.

This is not only about where Git repositories live. It is about issues, pull requests, CI runs, GitHub Actions minutes, bots, forks, and maintainer workflow. As contribution volume grows faster than maintainer capacity, platforms like GitHub are no longer just a web interface. They have to protect maintainers by deciding how much noise reaches them.

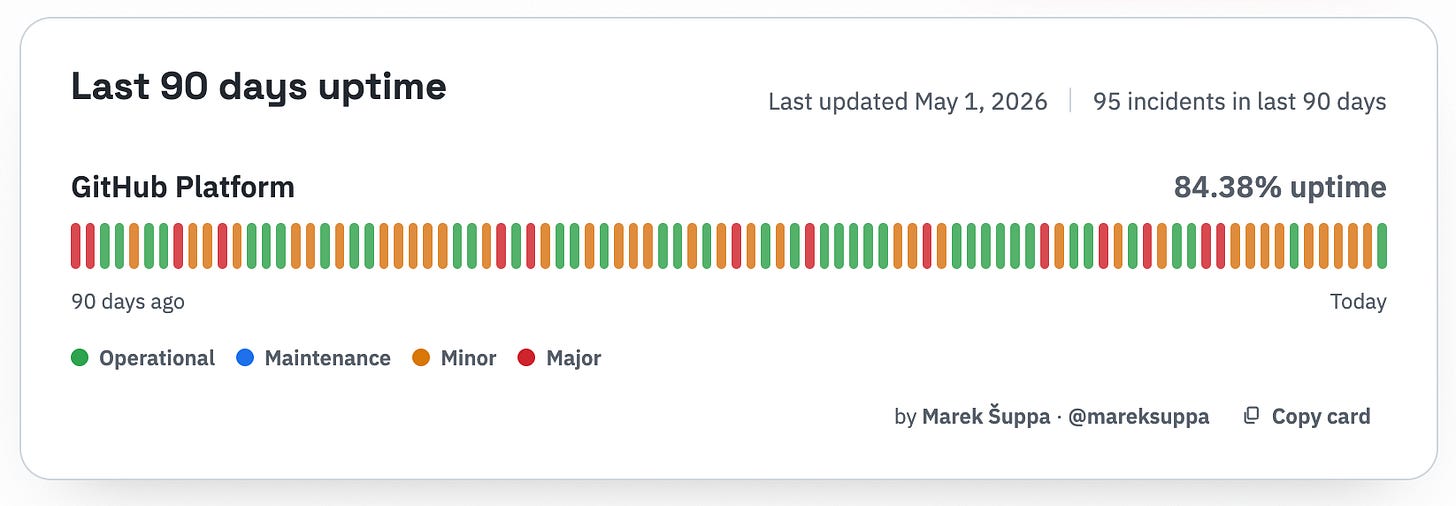

Ghostty is a good example of how platform pressure can be real without being an anti-open-source move. On April 28, 2026, Mitchell Hashimoto wrote that Ghostty is leaving GitHub. His stated reason was GitHub reliability and project workflow pain, especially around Actions and PR review. He also said Ghostty plans to keep a read-only mirror on GitHub while moving its working dependency elsewhere.

There are two parts here. First, even when the platform works as expected, it has pain points because it doesn’t protect the maintainer. Second, the platform is too often unavailable because it can’t keep up with the extra traffic AI generates.

That distinction matters. Leaving GitHub is not the same as leaving open source. Some projects are leaving because of a governance decision, a reliability decision, an attention-management decision, or a combination of these.

The important split is not GitHub versus non-GitHub.

The important split is between uncontrolled attention and maintainable contribution flow.

As AI agents create more branches, PRs, issues, and automated interactions, platform choice becomes part of a project’s anti-spam and trust model. Some projects will stay on GitHub and add stricter contribution rules. Some will move to Codeberg, Forgejo, SourceHut, GitLab, or self-hosted systems. Some will keep mirrors and move the review elsewhere.

That is still open source.

It is just open source with a harder boundary around the maintainer's time.

2/ Why AI-generated PRs create a maintainer review crisis

AI-generated PRs are not bad because AI touched them.

They are bad when nobody understands or owns them.

Godot made this problem visible early. In February 2026, Godot maintainers were overwhelmed by AI-generated pull requests, with Rémi Verschelde describing the work of identifying and sorting them as draining for maintainers. Coverage of the situation also noted that maintainers had to second-guess whether new contributors understood the code they were submitting.

That is the uncomfortable part. Open-source maintainers want to be welcoming. Many projects have spent years teaching new contributors, improving docs, labeling good first issues, and helping people get their first PR merged. AI changes the cost of that generosity.

The worst AI PR forces the maintainer to become four people at once:

The spec writer, because the contributor did not define the problem clearly.

The tester, because the contributor did not prove that the change works.

The architect, because the contributor did not understand the boundaries.

The teacher, because the contributor cannot defend the generated code.

That is not collaboration. That’s doing the work yourself.

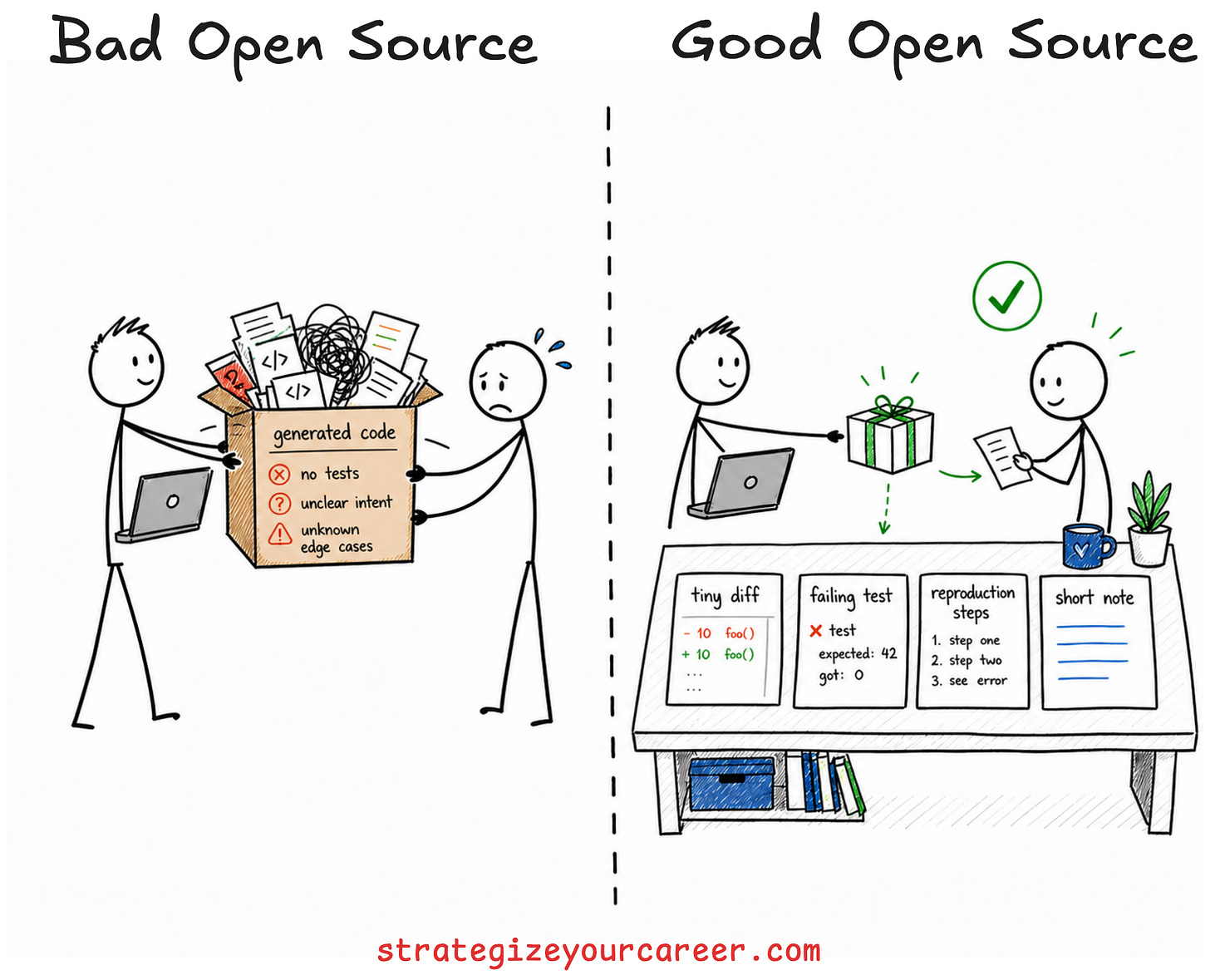

High-quality AI-assisted PRs still exist, but they look different. They are small. They are tied to a real issue, ideally one that is already open. They explain why the change belongs in the project. They include tests or reproduction steps. They respond to maintainer feedback with understanding instead of another AI-generated paragraph.

The difference is not AI versus human.

The difference is ownership versus transfer.


3/ Why open-source contribution is becoming gated contribution

The code can remain public while the contribution path becomes stricter.

That is the shift many people miss. Open code and open maintainer attention are not the same thing. A project can let everyone read, fork, study, and modify the code while still requiring stricter rules before maintainers spend time on a PR, issue, or security report.

This can look like:

Requiring a linked, maintainer-approved issue before a PR.

Rejecting PRs from unknown contributors and closing vague issues.

Limiting security reports to researchers with a reputation score.

Asking for smaller changes that can be reviewed independently.

Using invitation or maintainer sponsorship.
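The first and fourth gates in the list above can be pictured as an automated check that runs before a PR ever reaches a human. This is a minimal sketch under stated assumptions: the `Issue` and `PullRequest` types, the `"approved"` label convention, and the 400-line limit are all hypothetical, not any platform’s API or a recommended number.

```python
from __future__ import annotations

from dataclasses import dataclass, field

# Hypothetical limit; each project would choose its own.
MAX_CHANGED_LINES = 400


@dataclass
class Issue:
    number: int
    labels: set[str] = field(default_factory=set)


@dataclass
class PullRequest:
    linked_issue: Issue | None
    lines_added: int
    lines_removed: int


def gate_pull_request(pr: PullRequest) -> list[str]:
    """Return reasons to hold a PR before human review; empty means it can proceed."""
    reasons: list[str] = []
    if pr.linked_issue is None:
        reasons.append("no linked issue")
    elif "approved" not in pr.linked_issue.labels:
        # Hypothetical convention: maintainers label accepted work "approved".
        reasons.append(f"issue #{pr.linked_issue.number} is not maintainer-approved")
    changed = pr.lines_added + pr.lines_removed
    if changed > MAX_CHANGED_LINES:
        reasons.append(f"diff too large to review independently ({changed} lines changed)")
    return reasons


# Example: a large PR linked to an issue nobody has approved yet.
pr = PullRequest(
    linked_issue=Issue(number=1234, labels={"bug"}),
    lines_added=900,
    lines_removed=50,
)
print(gate_pull_request(pr))
# ['issue #1234 is not maintainer-approved', 'diff too large to review independently (950 lines changed)']
```

A check like this only decides whether a PR earns maintainer attention. It says nothing about whether the change is good; that judgment still belongs to a human reviewer.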

That may feel less open if you learned open source as “anyone can send anything.” But a healthier definition is “anyone can inspect, learn, fork, and propose, while maintainers decide how their limited attention is spent.”

An open-source project doesn’t owe anything to anyone. Someone decided to put the code in the open and let you use and modify it, but they don’t have to accept your modifications.

Everyone can read the code.

Not everyone gets to spend maintainer time.

That line sounds harsh until you have maintained something under pressure. If I’m getting paged at 3 a.m. several times a week at work and someone outside the team pressures me to add their feature to my service, I don’t owe them my attention. Something more pressing needs it, for the project and for the team.

4/ How AI security reports are creating high-cost triage

AI security reports are uniquely expensive because the claim is cheap and the response is high stakes.

Reports routinely put “critical vulnerability” in the title, and validating that claim takes real security work. A maintainer has to check exploitability, affected versions, the threat model, and severity.

curl is the sharpest public example. On January 26, 2026, Daniel Stenberg wrote that the curl bug-bounty program would officially stop on January 31, 2026. He said curl had seen an explosion in AI slop reports, and that the confirmed vulnerability rate fell from somewhere above 15% in previous years to below 5% starting in 2025.
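A rough back-of-the-envelope calculation shows why those percentages matter. Assuming, as a simplification, that the confirmed-vulnerability rate is the chance that any single report turns out to be real, the number of reports a team triages per real vulnerability is roughly the reciprocal of that rate:

$$
\frac{1}{0.15} \approx 6.7 \ \text{reports per real vulnerability}, \qquad \frac{1}{0.05} = 20 \ \text{reports per real vulnerability}
$$

Because the earlier rate was above 15% and the later one below 5%, the first figure is an upper bound and the second a lower bound, so under this simple model the triage work per confirmed vulnerability more than tripled.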

That is a huge change in maintainer economics. The security team still has to treat reports seriously enough to avoid missing real vulnerabilities. Imagine the bad press if a zero-day surfaces and someone points out that it had been reported and ignored. But if most reports are false, vague, generated, or adversarial, the program becomes an attention sink for the maintainers.

Node.js shows a different response. In February 2026, the Node.js project updated its HackerOne program to require a HackerOne Signal score of 1.0 or higher for vulnerability reports. The Node.js security page now says HackerOne submissions require a minimum Signal score, with a direct steward path for people below the threshold.

That is not a blunt rejection of security research.

It is a filter.

And filters become more defensible when false positives rise.
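The Node.js rule can be pictured as a simple routing decision. This sketch is hypothetical: the `route_report` function, the `Route` names, and the handling of reporters with no score are illustrative assumptions. Only the 1.0 Signal threshold and the existence of a steward path for reporters below it come from the Node.js policy described above.

```python
from enum import Enum
from typing import Optional

# From the Node.js policy described above: a minimum HackerOne Signal of 1.0.
MIN_SIGNAL = 1.0


class Route(Enum):
    HACKERONE = "submit through the HackerOne program"
    STEWARD = "use the direct steward path"


def route_report(signal_score: Optional[float]) -> Route:
    """Choose a reporting channel from a reporter's reputation score (hypothetical helper)."""
    # Assumption: a reporter with no score is handled like one below the threshold.
    if signal_score is not None and signal_score >= MIN_SIGNAL:
        return Route.HACKERONE
    return Route.STEWARD


print(route_report(1.4).value)  # submit through the HackerOne program
print(route_report(0.3).value)  # use the direct steward path
```

The second channel is what keeps this a filter rather than a rejection: reporters below the threshold still have a way in, just not through the default queue.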

The AI security-report problem is not just volume. It is incentives. A bounty creates a reason to stretch a weak finding into a scary claim to get paid more. AI makes the stretching cheaper. The maintainer pays the cost of disproving it.

This is why reputation gates are likely to become normal. They are imperfect, and they can exclude sincere new researchers. But the old model assumed report volume would stay human-scaled. That assumption is gone, and the model is broken. No solution is perfect, and reputation filters look like the least bad option.

5/ Why open-source companies are rethinking openness as business risk

Commercial open source has a different problem from volunteer maintenance.

For companies, AI can make cloning, scanning, support burden, exploit research, and fast-follow competition cheaper. That does not mean every company should close source. It does mean the risk calculation has changed.

Cal.com is a recent example. On April 15, 2026, Cal.com published a technical post explaining that it had moved its production codebase from a public repository to a private one. The public repository became Cal.diy, an open-source, self-hostable, community-driven version with commercial and enterprise features removed.

I would be careful with this category. Many licensing shifts predate modern coding agents. HashiCorp, Sentry, CockroachDB, and other companies had business-model pressure long before the current AI wave.

AI is not the only cause.

It is an accelerator.

It changes how fast competitors can inspect and imitate product behavior. It changes how quickly attackers can scan code for weak patterns. And it changes how leadership thinks about exposing production logic.

There are already many alternatives to open-sourcing part of your software. Some companies will respond with source-available licenses. Some will use closed-core models. Some will delay public releases. Some will split a community edition from a commercial product. Some will stay fully open because openness is still their strongest advantage.

The point is not that every closed-source move is justified by AI. Some will be business decisions that use security as an excuse, the same way many companies cite AI as an excuse for layoffs.

The point is that AI gives companies another reason to revisit these decisions.

What a responsible AI-assisted open-source contribution looks like

The future contribution signal is judgment.

A responsible AI-assisted contribution starts before code. Code is the easy part now. Start with the issue, design constraint, reproduction, failing test, or maintainer-approved direction. If you cannot explain why the change belongs in the project, do not ask a model to write it.

Then keep the diff small. A small PR tells the maintainer, “I am respecting your attention.” A large generated PR from an unknown contributor says, “Please become responsible for my uncertainty.”

There are also contribution modes that are now more valuable than code, like:

Reproduce a bug.

Test a release candidate.

Review a design.

Confirm behavior across platforms.

AI can help with all of that if you use it as a thinking aid instead of a way to dodge responsibility.

The anti-pattern is using AI to generate something nobody asked for and then demanding that someone pay attention to it.

This one is personal because I use AI every day. I do not want less AI in software engineering. I want more ownership around AI output.

The best AI-assisted contributor reduces the maintainer’s burden.

The worst one transfers it.


When not to use AI for open-source contributions

Do not use AI when you cannot tell whether the answer is correct.

That sounds obvious, but it is the failure mode behind most of this mess. If you are new to a codebase, AI can help you read it. It can summarize files, find references, explain test setup, and help you ask better questions. But it should not become your substitute for understanding.

Do not use AI to write vulnerability reports unless you can reproduce the issue and explain exploitability yourself. Do not use AI to write a proposal if you cannot defend the tradeoffs. Do not use AI to submit a PR because a chatbot told you the project “should” have a feature.

There is a useful rule here:

If the maintainer asks, “Why did you do this?” and your honest answer is “The model suggested it,” you are not ready to submit.

Use AI to prepare. Use AI to inspect. Use AI to test your thinking.

But when you submit, you own the work.

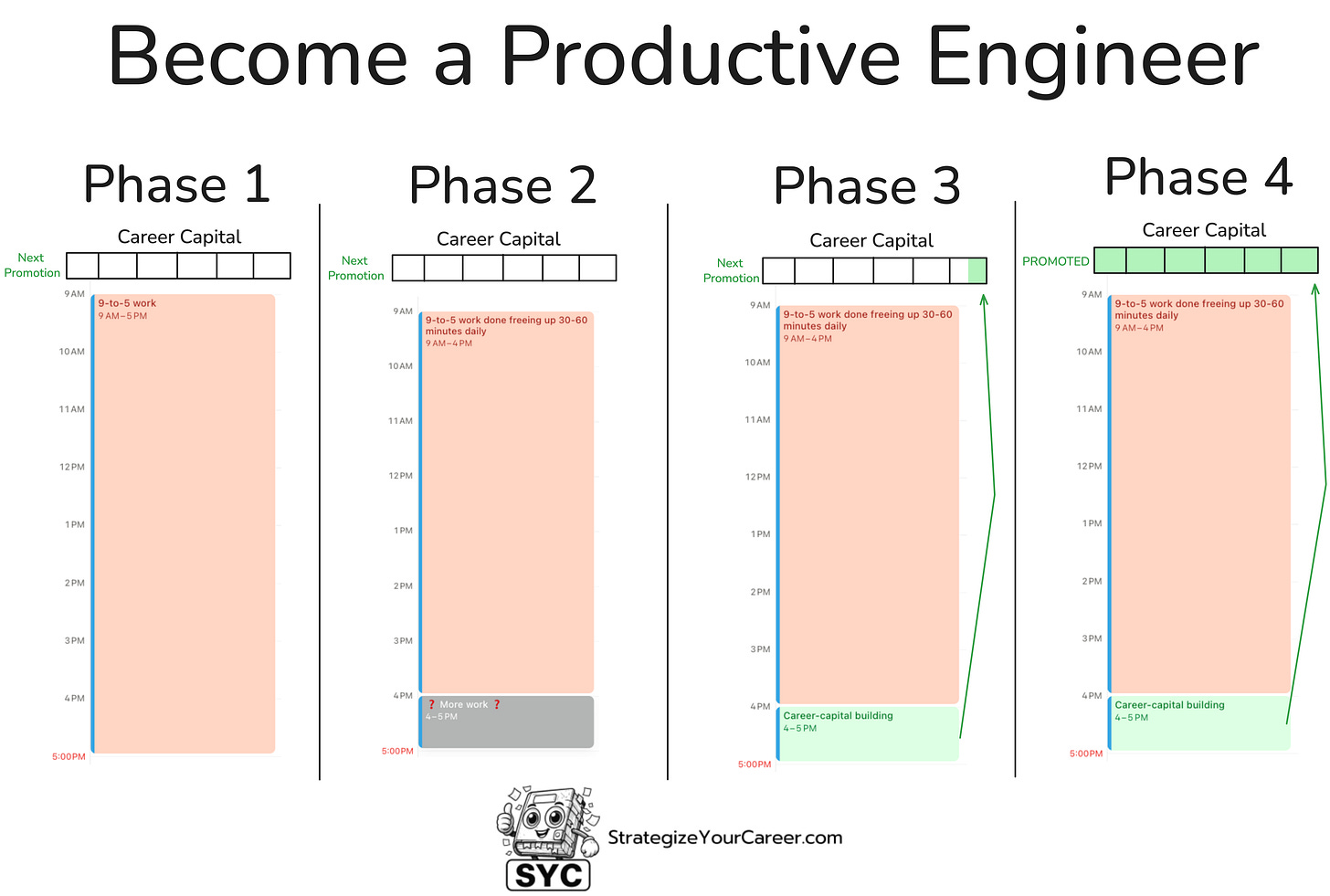

This article is part of our system for moving between phases 2 and 3. Mastering AI helps you build the XP points you need for your next promotion, because it is becoming an important promotion criterion.

Check out the full system here.

Conclusion: The future of AI and open source is open code, gated attention

Open source is not disappearing.

But the default permission model around maintainer attention is changing.

AI made low-quality participation cheap enough that projects need stronger gates. Some will gate PRs. Some will gate security reports. Some will move platforms.

The old assumption was that contribution effort filtered intent.

That assumption is broken.

The new contribution signal is ownership: knowing what to change, knowing what not to change, proving the change works, and respecting the maintainer’s time.

Key Takeaways

AI is not killing open source, but it is forcing maintainers to protect attention more deliberately.

AI-generated PRs are harmful when contributors do not understand or own the code they submit.

Open-source projects can keep code public while gating PRs, issues, and vulnerability reports.

AI security reports are expensive because scary claims are easy to generate and hard to validate.

The best AI-assisted contributors use AI to reduce maintainer burden, not transfer it.

AI did not end open source.

It made open source admit that maintainer attention was never free.

Recap of the article

Is AI killing open-source software?

AI is not killing open-source software as a model, but it is changing how open-source projects protect maintainer time. The biggest problem is not code generation itself. The problem is that AI makes low-quality PRs, issues, and security reports cheap enough to overwhelm human review.

Are AI-generated PRs always bad?

No. AI-generated PRs can be useful when the contributor understands the change, keeps it small, tests it, and can respond to review. They become harmful when the contributor sends generated code they cannot explain and leaves the maintainer to find the real requirements.

Should open-source maintainers ban AI-generated code?

Some projects may ban AI-generated code, but a blanket ban is not the only option. Many projects will do better with contribution rules that require ownership, tests, disclosure when requested, small diffs, and linked issues before implementation.

Why are open-source projects gating contributions if the code is public?

Public code means anyone can read, fork, and study the project. It does not mean everyone is entitled to unlimited maintainer attention. Contribution gates help projects keep review, security triage, and support channels usable.

Should developers disclose AI use in pull requests?

Developers should disclose AI use when the project asks for it. Even when disclosure is not required, the contributor should own the output fully, including tests, reasoning, tradeoffs, and follow-up changes.

If you want to go deeper on writing better AI instructions before you ask a model to touch real code, read Prompt Engineering vs Spec Engineering next.