Context Engineering Guide for Software Engineers

How context engineering techniques like RAG, system prompts, web search, and MCP tools create a big impact, backed by experience. I share my context-engineered code review process.


Everyone is waiting for the next exponential leap. The arrival of GPT-5 showed we are not making exponential improvements or reaching a super-intelligent model soon. The jump from one version to the next is gradual. The engineers getting ahead are not just swapping today’s model for tomorrow’s slightly smarter one.

The real performance bottleneck for companies isn’t the model’s intelligence. It’s the quality of the context you give it. Context engineering is the practice of designing the entire information ecosystem around your AI: selecting, structuring, and delivering the right data at the right time so the model produces accurate, useful output. Stop waiting for a smarter AI and start building the systems that give you a 10x career leap today.

Companies reward force multipliers, not solo coders. Context engineering lets you automate repeated cognitive work, reduce review friction, and own outcomes that move the business forward.

In this post, you'll learn:

Why context engineering is a high-leverage skill for software engineers

The 9 context engineering techniques that make AI output accurate and useful

How to apply context engineering to a real code review workflow (with example)

How to turn context engineering results into promotion-ready evidence

What Is Context Engineering?

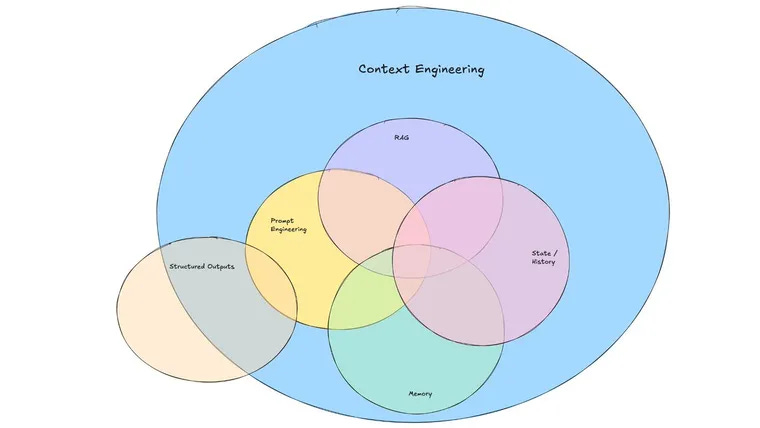

Context engineering is the process of designing, selecting, and structuring the information you feed to an AI model so it produces accurate, relevant output for your specific task. Unlike basic prompt engineering, which focuses on how you phrase a single question, context engineering is about the entire system: what data the model sees, in what format, from which sources, and at what point in the workflow.

In practice, context engineering combines techniques like RAG (Retrieval Augmented Generation), system prompts, tool integrations (MCP), memory patterns, and workflow orchestration into a repeatable pipeline. The goal is to close the gap between what the model knows (its training data) and what it needs to know (your company’s codebase, standards, and business logic).

Why Context Engineering Is the Skill to Focus On

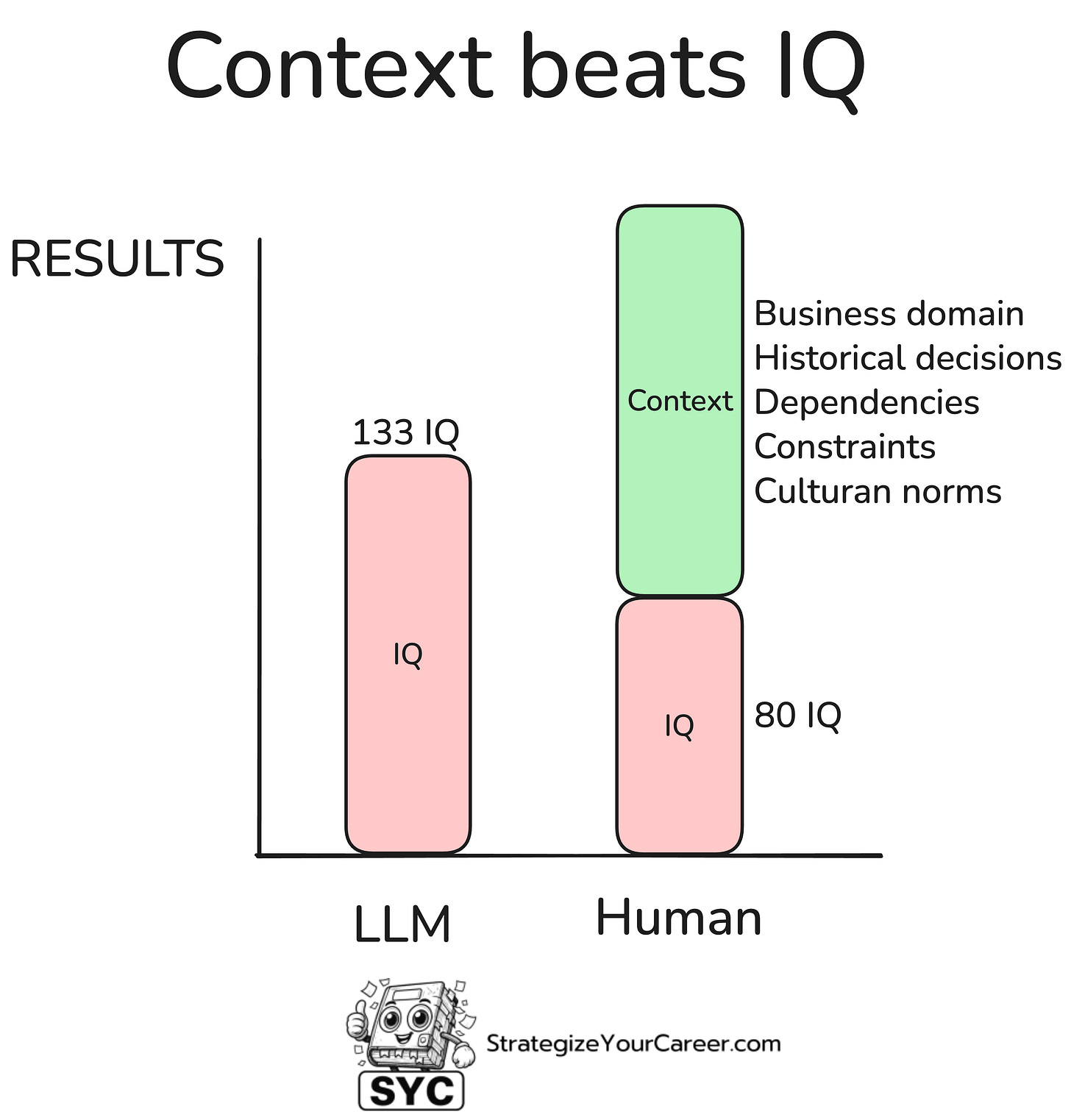

Even the best LLM has zero awareness of your company’s architecture, business rules, or team culture. It does not know your microservices, your coding standards, or the historical context from a six-month-old Slack thread. Without this, its output will be generic and wrong in subtle ways.

Imagine this: you've been at your company for three years, and a new hire comes in with a higher IQ. You'll still outperform that new hire on their first day. You ship faster because you know the approval flows and hidden dependencies. You prevent regressions because you remember past incidents and edge cases. You reduce cycle time because you know exactly where to get answers without waiting. You navigate cross-team dependencies because you know who to involve and when.

You do not create impact in your team because you are the smartest engineer. You create impact because you understand the business domain.

The same applies to AI. A model without context is like a new hire without onboarding. Feed it the same environment you operate in and it becomes a high-speed extension of your brain. That is the essence of context engineering: giving AI the same insider advantage you have.

9 Context Engineering Techniques That Improve AI Output

Context engineering isn't “just prompting better.” It is using a set of repeatable techniques to give the AI the right information, in the right structure, at the right time.

1. System instructions: role and persona prompting. Set a clear role or persona for the model before it responds. For example, "You are a principal software architect explaining event-driven systems to a mid-level engineer". This shapes the tone, detail, and perspective of the response.

2. Retrieval Augmented Generation (RAG). Combine the model with external knowledge sources that you store in a vector DB. Instead of relying solely on the model's training data, retrieve the relevant documents and feed them into the prompt so answers are accurate and current. You don't need a PhD for this: the vector DB can be a PostgreSQL database with the pgvector extension.

3. Short- and long-term memory patterns. Use different memory scopes intentionally. Short-term memory is the conversation context within the current chat. Long-term memory, like ChatGPT's persistent memory, retains facts and preferences over time for personalization.

4. Web search grounding. Enhance answers with real-time search results. Ask the model to search for the latest information, then ground its reasoning in that retrieved data.
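To make techniques 1 through 4 concrete, here is a minimal sketch of one context assembly step in Python. It is an illustration under stated assumptions, not a production implementation: retrieval uses a toy word-count cosine similarity in place of real embeddings and a vector DB such as pgvector, web results are passed in as plain strings rather than fetched, and every name (`DOCS`, `retrieve`, `build_context`) and every sample document is hypothetical.

```python
import math
import re
from collections import Counter

# Hypothetical internal docs; in practice these would live in a vector DB.
DOCS = [
    "Payments service: all refunds must go through the ledger API.",
    "Code style: use snake_case for Python functions and type hints everywhere.",
    "Past incident: retry storms happened when timeouts were missing on HTTP clients.",
]


def _vector(text):
    """Toy stand-in for an embedding: lowercase word counts."""
    return Counter(re.findall(r"[a-z0-9_]+", text.lower()))


def _cosine(a, b):
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[w] * b[w] for w in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query, docs, k=1):
    """RAG step: return the k docs most similar to the query."""
    q = _vector(query)
    ranked = sorted(docs, key=lambda d: _cosine(q, _vector(d)), reverse=True)
    return ranked[:k]


def build_context(question, history, long_term_facts, web_results, max_turns=4):
    """Assemble a chat message list from the four context sources."""
    retrieved = retrieve(question, DOCS, k=1)
    system = "\n\n".join([
        # 1. System instructions: role and persona.
        "You are a principal software architect reviewing code "
        "for a mid-level engineer.",
        # 3. Long-term memory: facts that persist across chats.
        "Known facts about the user:\n"
        + "\n".join(f"- {fact}" for fact in long_term_facts),
        # 2. RAG: documents retrieved for this specific question.
        "Internal documentation:\n"
        + "\n".join(f"- {doc}" for doc in retrieved),
        # 4. Web search grounding: results supplied by a search tool.
        "Web search results:\n"
        + "\n".join(f"- {result}" for result in web_results),
    ])
    messages = [{"role": "system", "content": system}]
    # 3. Short-term memory: only the most recent turns of this chat.
    messages.extend(history[-max_turns:])
    messages.append({"role": "user", "content": question})
    return messages


if __name__ == "__main__":
    demo = build_context(
        "How should refunds be handled in the payments service?",
        history=[{"role": "user", "content": "Hi, I am reviewing a PR."}],
        long_term_facts=["Prefers Python examples"],
        web_results=["(placeholder search result)"],
    )
    for message in demo:
        print(message["role"], "->", message["content"][:60])
```

The role/content message list mirrors the shape that many chat APIs accept, so the output would need adapting to whichever provider you use. The design point is that the model call itself stays unchanged; only the assembled context differs from one question to the next.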