What's New in MCP in 2026: Model Context Protocol Status, Updates & Tasks

The complete MCP status report for 2026: Streamable HTTP, Tool Annotations, MCP Tasks, and solving the context window crisis for AI agents.


Setting up a new AI agent often feels like building a custom bridge for every single stream you need to cross.

I’ve plugged different APIs and scripts into LLMs to perform operations. This is a common story for software engineers today, who find themselves repeating the same integration patterns for every new project or data source.

Before November 2024, the industry had an integration problem: every model needed its own connector for every enterprise data source.

The industry needed a standard. The Model Context Protocol (MCP), introduced by Anthropic and now governed by the Linux Foundation’s Agentic AI Foundation, established an open-source communication layer that collapses this integration matrix from M×N custom connectors (M LLMs times N data sources) into M+N implementations of a single standard.
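
To make the arithmetic concrete, here is a tiny sketch that counts integrations with and without a shared protocol. It assumes each LLM host implements MCP once and each data source exposes exactly one server, which is a simplification of real deployments.

```python
def point_to_point(models: int, sources: int) -> int:
    # Without a shared protocol: one custom connector per (model, source) pair.
    return models * sources


def with_shared_protocol(models: int, sources: int) -> int:
    # With MCP (simplified): each model's host implements the client side once,
    # and each data source exposes a single server.
    return models + sources


for m, n in [(3, 10), (5, 20), (10, 50)]:
    print(f"{m} LLMs x {n} sources: {point_to_point(m, n)} connectors vs {with_shared_protocol(m, n)} with MCP")
```

The gap widens as either side grows: at 10 LLMs and 50 sources it is 500 connectors versus 60.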

I wrote here about an AI tool I built, and I built it with an MCP server. At that point, I was keeping up with what was new, but I definitely didn’t know how to use it.

Also, MCP has changed a lot since launch, so it’s worth diving deep into it.

In this post, you’ll learn:

How MCP evolved from a simple data-fetching protocol into an enterprise-grade autonomous framework.

The core architecture of the client-host-server model built on JSON-RPC 2.0.

How asynchronous Tasks and Agentic Sampling are shifting orchestration to the server.

The technical mechanics of interactive MCP Apps and client-side Web MCP.

How Zero-Trust security (CIMD and XAA) handles identity at enterprise scale.

Model Context Protocol (MCP) Status in 2026

Since Anthropic first released MCP in November 2024, the protocol has evolved rapidly. Here’s the current status as of 2026:

What changed in the March 2025 update:

Streamable HTTP replaced the older HTTP+SSE (Server-Sent Events) transport as the default for remote servers. It’s simpler to deploy, easier to debug, and works behind corporate proxies.

Audio Content Support was added, extending MCP beyond text and images.

Tool Annotations introduced safety guardrails. Servers can now mark tools as read-only or destructive, so hosts can enforce approval workflows.

What’s new in 2026:

MCP Tasks allow servers to run long-lived background operations and report progress. This is a shift from request-response to autonomous workflows.

Enterprise adoption is accelerating as companies standardize their AI agent infrastructure around MCP instead of building custom connectors.

The context window crisis is being addressed through Tool Search patterns and Lazy Loading, which avoid spending 70,000+ tokens on tool definitions before a conversation even starts.

The protocol is no longer experimental. It’s becoming the standard communication layer between LLMs and enterprise data sources.

What Is MCP? The Model Context Protocol Explained

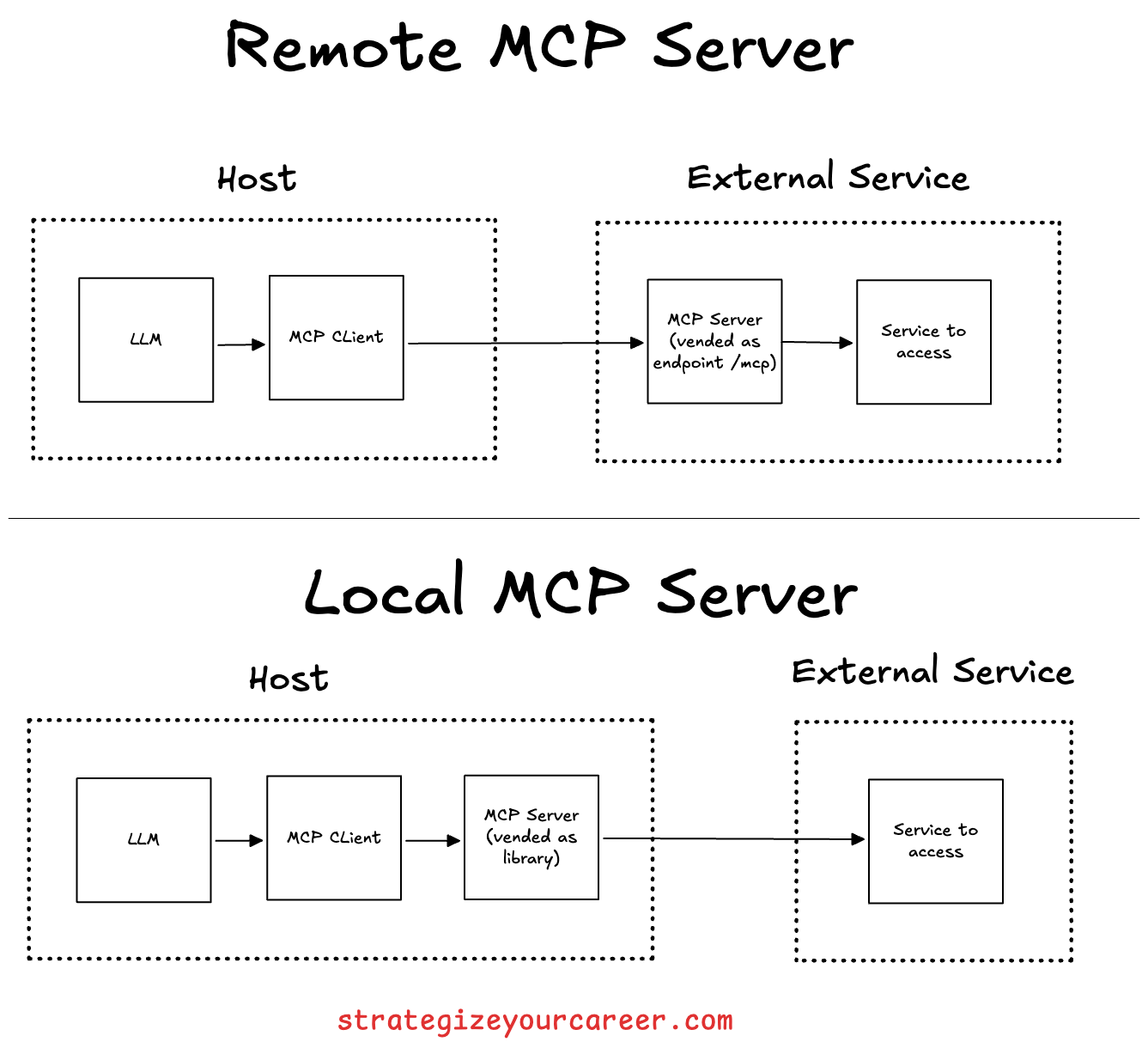

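The protocol uses a strict client-server architecture in which every message is a JSON-RPC 2.0 request, response, or notification. Connections are stateful sessions, and communication is bidirectional: servers can send requests to clients, not just the other way around.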
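
To show what those messages look like, here is a minimal sketch that builds a `tools/call` request, a matching response, and a notification using only the standard library. The method names `tools/call` and `notifications/initialized` come from the MCP spec; the tool name `query_logs` and its arguments are hypothetical.

```python
import json
from itertools import count
from typing import Optional

_ids = count(1)  # request ids must be unique within a session


def request(method: str, params: Optional[dict] = None) -> dict:
    # A request carries an id, so the receiver must send back a response.
    msg = {"jsonrpc": "2.0", "id": next(_ids), "method": method}
    if params is not None:
        msg["params"] = params
    return msg


def notification(method: str, params: Optional[dict] = None) -> dict:
    # A notification has no id: fire-and-forget, no response expected.
    msg = {"jsonrpc": "2.0", "method": method}
    if params is not None:
        msg["params"] = params
    return msg


def response(req: dict, result: dict) -> dict:
    # A response echoes the request id so the sender can match it up.
    return {"jsonrpc": "2.0", "id": req["id"], "result": result}


# Client -> server: call a (hypothetical) tool.
call = request("tools/call", {"name": "query_logs", "arguments": {"service": "billing", "limit": 5}})

# Server -> client: a result made of content blocks.
reply = response(call, {
    "content": [{"type": "text", "text": "5 log lines returned"}],
    "isError": False,
})

# Client -> server: lifecycle notification sent once initialization completes.
ready = notification("notifications/initialized")

for msg in (call, reply, ready):
    print(json.dumps(msg))
```

Because either side may send requests, the same helpers would work for server-initiated calls such as `sampling/createMessage`.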

By separating the LLM’s reasoning from deterministic code execution, MCP lets you swap models or data sources without changing your underlying integration logic.

There are 3 parts:

Hosts: The user-facing LLM applications (e.g., Claude Desktop, Cursor, or specialized IDEs) that manage the agentic loop.

Clients: The connectors inside the host that manage protocol connections. Each client maintains a one-to-one connection with a single server.

Servers: The external services (Postgres, GitHub, Slack) exposing specific capabilities via standardized primitives.
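
To see how the three roles fit together, here is an in-process sketch: one host owns several clients, each client talks to exactly one server, and the host merges every server’s tools into a single list for the model. The classes and the `server__tool` naming scheme are hypothetical; real hosts exchange JSON-RPC messages over stdio or HTTP instead of making direct method calls.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Server:
    # Stand-in for an external MCP server (e.g. GitHub, Postgres).
    name: str
    tools: dict[str, Callable[..., str]]

    def list_tools(self) -> list[str]:
        return sorted(self.tools)

    def call_tool(self, tool: str, **arguments) -> str:
        return self.tools[tool](**arguments)


@dataclass
class Client:
    # One client per server: a 1:1 connection owned by the host.
    server: Server

    def list_tools(self) -> list[str]:
        return self.server.list_tools()

    def call_tool(self, tool: str, **arguments) -> str:
        return self.server.call_tool(tool, **arguments)


@dataclass
class Host:
    # The user-facing app: owns the clients and routes the model's tool calls.
    clients: dict[str, Client] = field(default_factory=dict)

    def connect(self, server: Server) -> None:
        self.clients[server.name] = Client(server)

    def available_tools(self) -> list[str]:
        # Namespace tools by server so names from different servers can't collide.
        return [f"{name}__{tool}" for name, client in self.clients.items() for tool in client.list_tools()]

    def dispatch(self, qualified_name: str, **arguments) -> str:
        server_name, tool = qualified_name.split("__", 1)
        return self.clients[server_name].call_tool(tool, **arguments)


host = Host()
host.connect(Server("github", {"open_pr": lambda title: f"PR opened: {title}"}))
host.connect(Server("postgres", {"query": lambda sql: f"ran: {sql}"}))

print(host.available_tools())  # what the model sees
print(host.dispatch("github__open_pr", title="Fix config"))
```

Swapping the model only touches the host; swapping a data source only touches its server.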

The standard originally launched with three foundational primitives:

Tools are the executable functions that allow my agents to actually do things in the real world. Hosts are expected to get explicit user approval before destructive actions, which makes tools great for AI automation while keeping some guardrails.

Resources act as read-only data sources that provide models with contextual information. They are URI-addressable, which means I can point an agent to application logs or real-time metrics.

Prompts are reusable templates that standardize how users interact with the model. They let an MCP server ship not only its functionality but also guidance on how to use it.
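
Here is roughly what a server advertises for each primitive, written as the plain dicts it would return from `tools/list`, `resources/list`, and `prompts/list`. The field names (`inputSchema`, `uri`, `mimeType`, `arguments`) follow the MCP spec; the concrete tool, resource, and prompt are made-up examples, and `missing_required` is a hypothetical host-side helper, not part of any SDK.

```python
TOOL = {
    "name": "create_issue",
    "description": "Create an issue in the tracker",
    "inputSchema": {  # JSON Schema describing the arguments
        "type": "object",
        "properties": {"title": {"type": "string"}, "body": {"type": "string"}},
        "required": ["title"],
    },
}

RESOURCE = {
    "uri": "logs://billing/today",  # resources are addressed by URI
    "name": "Billing service logs",
    "mimeType": "text/plain",
}

PROMPT = {
    "name": "triage_incident",
    "description": "Walk the model through triaging an incident",
    "arguments": [{"name": "incident_id", "required": True}],
}


def missing_required(tool: dict, arguments: dict) -> list:
    # Hypothetical check: which required arguments did the model leave out?
    required = tool["inputSchema"].get("required", [])
    return [name for name in required if name not in arguments]


print(missing_required(TOOL, {"body": "Steps to reproduce..."}))
print(missing_required(TOOL, {"title": "Login fails"}))
```

The tool says what the server can do, the resource says what it can show, and the prompt says how to ask for it.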

MCP in Practice: How Hosts, Clients, and Servers Connect

I wrote here about the 10 steps to build an AI agent. One of them was plugging in MCP servers.

In that example, I was applying configuration changes described in a Jira ticket and raising a PR. One of the MCP servers I used gave the agent Jira access, so instead of copy-pasting from Jira into my LLM of choice, I just had to write “Implement Jira-1234”.

The Future of MCP: From Data Fetching to Autonomous AI Agent Workflows

It’s crazy to think MCP has only been around for a little over a year. The initial release focused on basic connections, and I think that’s where everyone stopped reading about the protocol. But there’s more.

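The March 2025 update replaced the brittle HTTP+SSE transport, which needed a separate Server-Sent Events endpoint, with Streamable HTTP. All communication now goes through a single endpoint (for example, /mcp): the client POSTs JSON-RPC messages, and the server answers with either plain JSON or an SSE stream. Because it’s ordinary HTTP, enterprise WAFs can inspect the payloads, sessions are tracked with the Mcp-Session-Id header, and interrupted streams can be resumed with the standard Last-Event-ID header.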
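
A rough sketch of the client side of Streamable HTTP, preparing requests with the standard library rather than sending them. The `https://example.com/mcp` URL is an assumption (servers choose their own endpoint path), and the session id and SSE payloads are placeholders; `Mcp-Session-Id` and `Last-Event-ID` are the header names the spec uses.

```python
import json
from typing import Optional

ENDPOINT = "https://example.com/mcp"  # assumption: servers choose their own path


def post_request(message: dict, session_id: Optional[str] = None) -> tuple[str, dict[str, str], bytes]:
    # Every client-to-server message is an HTTP POST to the one endpoint.
    # The client accepts either a single JSON reply or an SSE stream.
    headers = {
        "Content-Type": "application/json",
        "Accept": "application/json, text/event-stream",
    }
    if session_id:
        # Once the server issues a session id at initialization, send it on every request.
        headers["Mcp-Session-Id"] = session_id
    return ENDPOINT, headers, json.dumps(message).encode()


def last_event_id(sse_text: str) -> Optional[str]:
    # SSE events carry `id:` fields; the last one received is the resume point.
    last = None
    for line in sse_text.splitlines():
        if line.startswith("id:"):
            last = line[len("id:"):].strip()
    return last


def resume_request(session_id: str, last_id: str) -> tuple[str, dict[str, str]]:
    # After a dropped connection, GET the endpoint with Last-Event-ID so the
    # server can replay what was missed on that stream.
    headers = {
        "Accept": "text/event-stream",
        "Mcp-Session-Id": session_id,
        "Last-Event-ID": last_id,
    }
    return ENDPOINT, headers


url, headers, body = post_request({"jsonrpc": "2.0", "id": 2, "method": "tools/list"}, session_id="abc123")
print(url, headers)

stream = "id: 41\ndata: (first event payload)\n\nid: 42\ndata: (second event payload)\n\n"
print(resume_request("abc123", last_event_id(stream)))
```

Everything stays on one URL, which is what makes the traffic easy for proxies and WAFs to handle.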

Data modality also expanded to include Audio Content Support, allowing agents to work directly with voice analysis and TTS APIs. To make increasingly powerful tools safer, Tool Annotations (readOnlyHint and destructiveHint, among others) were introduced. They are hints rather than guarantees, but they give host applications what they need to trigger human-in-the-loop (HITL) warnings before high-stakes actions like database deletions.
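
Because annotations are hints, a host should only rely on them when it trusts the server. The sketch below is one hypothetical host policy built on `readOnlyHint` and `destructiveHint`, using the spec’s defaults (a tool with no annotations is treated as potentially destructive). The `server_trusted` flag, the three outcomes, and the example tool names are my own assumptions, not part of the protocol.

```python
from enum import Enum


class Decision(Enum):
    AUTO_RUN = "auto-run"
    ASK_USER = "ask user"
    ASK_WITH_WARNING = "ask user, show destructive warning"


def approval_for(tool: dict, server_trusted: bool) -> Decision:
    if not server_trusted:
        # Hints from an untrusted server can't be relied on: assume the worst.
        return Decision.ASK_WITH_WARNING
    ann = tool.get("annotations", {})
    # Spec defaults when a hint is absent: readOnlyHint=False, destructiveHint=True.
    if ann.get("readOnlyHint", False):
        return Decision.AUTO_RUN
    if ann.get("destructiveHint", True):
        return Decision.ASK_WITH_WARNING
    return Decision.ASK_USER  # modifies state, but only additively


tools = [
    {"name": "list_tables", "annotations": {"readOnlyHint": True}},
    {"name": "insert_row", "annotations": {"readOnlyHint": False, "destructiveHint": False}},
    {"name": "drop_table", "annotations": {"readOnlyHint": False, "destructiveHint": True}},
    {"name": "mystery_tool"},  # no annotations at all
]

for t in tools:
    print(t["name"], "->", approval_for(t, server_trusted=True).value)
```

Defaulting a missing `destructiveHint` to true means an unannotated tool gets the strictest treatment, which is the safer failure mode.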

MCP for AI Agents: Enterprise Adoption in 2026


The biggest shift in MCP’s trajectory is enterprise adoption. In 2024, MCP was a developer tool. In 2026, it’s infrastructure.

Why enterprises are adopting MCP for AI agents:

The M×N problem is real at scale. A company with 5 LLM providers and 20 internal data sources would need 100 custom connectors without MCP. With MCP, each data source exposes one server, and every LLM connects through the same protocol. That’s 25 connectors instead of 100.

Security and compliance. Tool Annotations let enterprises enforce approval workflows before destructive actions. Read-only tools can be allowed to run without human sign-off, while write tools can be gated behind explicit approval, as long as the annotations come from a server you trust. This maps directly to enterprise governance requirements.

Vendor independence. Because MCP is an open protocol (not tied to a single LLM provider), enterprises can swap models without rebuilding integrations. Your Postgres MCP server works the same whether the host is Claude, GPT, or an open-source model.

Agent orchestration. MCP Tasks enable agents to kick off long-running operations (data migrations, batch processing, multi-step workflows) and check back for results — a prerequisite for truly autonomous AI agent systems.
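
The Tasks pattern boils down to this: a request returns a handle immediately while the work continues in the background, and the agent checks back later. The sketch below models that in-process with asyncio (start a job, get a task id, poll status and progress, read the result). `TaskServer`, its methods, and the status strings are hypothetical simplifications; the Tasks section of the MCP specification defines the actual wire format.

```python
import asyncio
import uuid


class TaskServer:
    """Hypothetical server that runs long jobs in the background (not the MCP wire format)."""

    def __init__(self) -> None:
        self.tasks: dict[str, dict] = {}
        self._running: set = set()  # keep references so jobs aren't garbage-collected

    def start(self, batches: int) -> str:
        # Hand back a task id right away instead of blocking until the job ends.
        task_id = uuid.uuid4().hex
        self.tasks[task_id] = {"status": "working", "progress": 0, "result": None}
        job = asyncio.create_task(self._run(task_id, batches))
        self._running.add(job)
        job.add_done_callback(self._running.discard)
        return task_id

    async def _run(self, task_id: str, batches: int) -> None:
        task = self.tasks[task_id]
        try:
            for done in range(1, batches + 1):
                await asyncio.sleep(0.01)  # stand-in for one batch of real work
                task["progress"] = done
            task["result"] = f"migrated {batches} batches"
            task["status"] = "completed"
        except Exception as exc:  # report failures instead of staying "working" forever
            task["result"] = str(exc)
            task["status"] = "failed"

    def get(self, task_id: str) -> dict:
        # Clients poll this to check status and progress.
        return dict(self.tasks[task_id])


async def agent(server: TaskServer, batches: int = 5) -> str:
    task_id = server.start(batches)
    while (state := server.get(task_id))["status"] == "working":
        print(f"progress: {state['progress']}/{batches}")
        await asyncio.sleep(0.02)  # the agent is free to do other work here
    return state["result"]


print(asyncio.run(agent(TaskServer())))
```

Polling keeps the sketch simple; the point is that the agent never blocks on the migration itself.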

The companies moving fastest are those that have already standardized their internal APIs. If your services already expose clean REST or gRPC interfaces, wrapping them in MCP servers is straightforward.

Solving the MCP Context Window Crisis: Tool Search & Lazy Loading

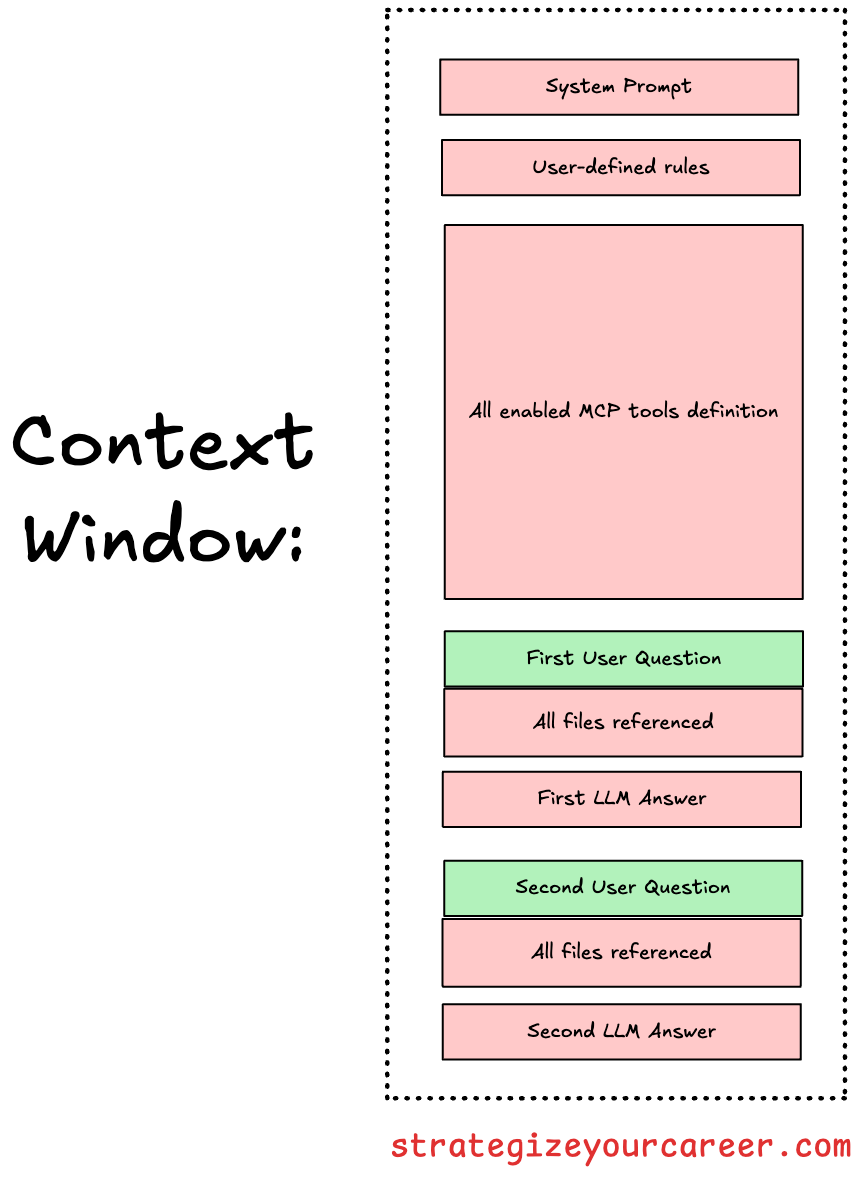

A critical bottleneck in early MCP integrations was context window pollution.

MCP tool definitions are loaded at the start of your conversation with the LLM, just like the system prompt (in your IDE, that’s where the rules you set to always apply end up).

You may think that you’re consuming a small piece of the context window with a prompt like “explain @FileA and @FileB”, but here’s the reality:

Loading 50+ tool definitions could consume 70k+ tokens before a conversation even begins, leading to degraded reasoning.

MCP client implementations like Claude Code are addressing this with the Tool Search Tool pattern, where only a single search tool is loaded up front and the model uses it to find the others, combined with Lazy Loading. By marking a tool with defer_loading: true, the system withholds its verbose schema until the model expresses intent to use it.
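
A toy version of that pattern: only non-deferred tools plus one search tool go into the initial context, and deferred tools are loaded when a search matches them. The `defer_loading` key mirrors the flag above, but the registry, the `search_tools` name, the keyword matching, and the four-characters-per-token estimate are all simplifying assumptions; real implementations use more robust search and real tokenizers.

```python
import json

SEARCH_TOOL = {"name": "search_tools", "description": "Find tools by keyword", "defer_loading": False}


def make_tool(name: str, description: str, deferred: bool = True) -> dict:
    # Pad the schema with 20 properties to mimic the verbose definitions real servers ship.
    props = {f"arg{i}": {"type": "string"} for i in range(20)}
    return {
        "name": name,
        "description": description,
        "inputSchema": {"type": "object", "properties": props},
        "defer_loading": deferred,
    }


SERVICES = ["jira", "github", "slack", "postgres", "sentry"]
OPERATIONS = ["create", "update", "delete", "list", "search", "get", "comment", "assign", "close", "export"]

# 51 tools: one always-needed tool plus 50 deferred ones.
REGISTRY = [make_tool("read_file", "Read a file from the workspace", deferred=False)] + [
    make_tool(f"{svc}_{op}", f"{op} in {svc}") for svc in SERVICES for op in OPERATIONS
]


def estimate_tokens(tools: list) -> int:
    # Rough heuristic (assumption): about 4 characters of JSON per token.
    return len(json.dumps(tools)) // 4


def initial_context() -> list:
    # Up front: the search tool plus every tool not marked for deferred loading.
    return [SEARCH_TOOL] + [t for t in REGISTRY if not t["defer_loading"]]


def search_tools(query: str) -> list:
    # Naive matching: deferred tools whose name and description contain every query word.
    words = query.lower().split()
    return [
        t for t in REGISTRY
        if t["defer_loading"] and all(w in f"{t['name']} {t['description']}".lower() for w in words)
    ]


print(f"all {len(REGISTRY)} tools up front: ~{estimate_tokens(REGISTRY)} tokens")
print(f"lazy start: ~{estimate_tokens(initial_context())} tokens")

# The model "expresses intent" by searching; only the matches get loaded.
context = initial_context() + search_tools("jira create")
print([t["name"] for t in context])
```

The trade-off is an extra round trip for the search, in exchange for not paying for 50 schemas the model never uses.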

I think it’s about time this made it into the standard so every client behaves the same way, similar to how “Agent Skills” are loaded on demand.