How a Senior Principal Engineer communicates at Amazon

Vague communication kills developer productivity. Master the 3-level framework and RFC 2119 to give precise instructions to both your team and your AI agents.


The difference between a junior developer and a senior leader often comes down to communication precision. Junior developers might know how to write code, but they often lack the organizational habits to scale their impact across a team.

This gap becomes obvious when we look at how different engineers interact with artificial intelligence. Treating an AI tool like a casual chat partner costs you productivity. To do your best work today with both humans and AI, you must know how to communicate with each of them.

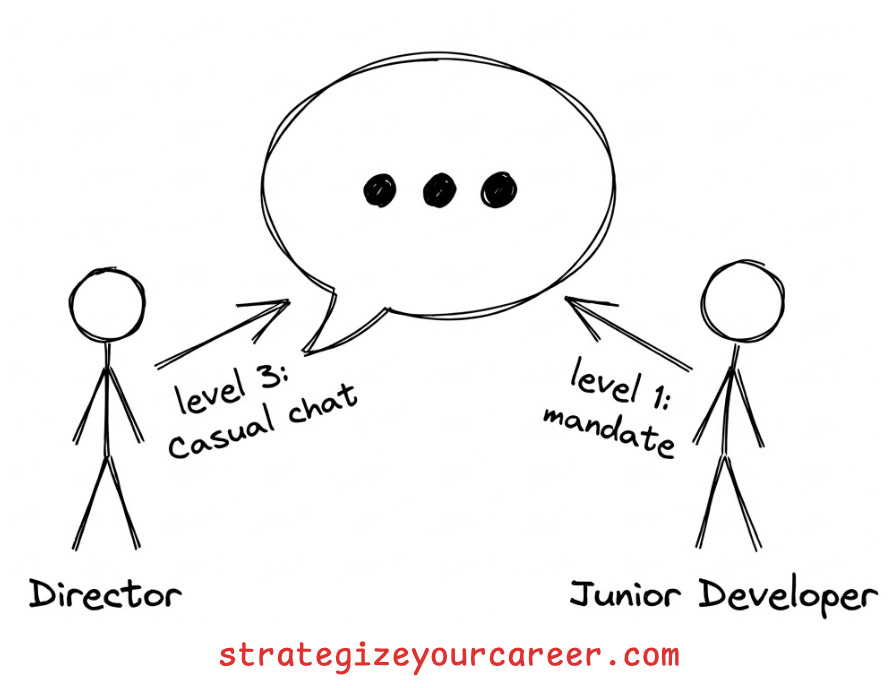

We can break down communication into three distinct levels of authority. I learned about this framework from Luu Tran, a Senior Principal Engineer working on Alexa at Amazon. This mental framework will change how you interact with both your colleagues and your AI agents.

In this post, you’ll learn:

How to categorize your feedback using Luu’s proven framework.

How to apply human communication styles to machine instructions.

Techniques to stop your coding assistant from blindly agreeing with bad ideas.

The 3 levels of engineering communication

The weight of your comments matters in a technical environment.

When a Senior Principal Engineer speaks, most people take those words very seriously. When a junior engineer speaks, people assume a lower level of confidence because of their limited experience. Teams therefore need a system that helps them distinguish between mandatory changes and casual thoughts.

Level 1: Authoritative

Luu Tran defines a clear framework starting with the authoritative veto. This is your level one input, which acts as a non-negotiable directive based on deep expertise.

You use this level when you know your feedback applies directly to the core architecture or strict security constraints of a project. It leaves no room for debate and requires immediate compliance.

If the person doesn’t accept the feedback, you escalate to your management chain or theirs, and you ask other peers to jump in. In essence, you move heaven and earth to make sure they don’t make the mistake you foresee.

Level 2: Talking from experience

The second tier is the advisory experience. This level of input offers guidance without enforcing a strict path.

As an experienced engineer, you’ve worked on many projects with many people, and you can see parallels between present situations and your past work.

You can’t be completely sure of the exact impact on their specific project, or you know that several options are valid, so you offer your input as a suggestion rather than a mandate.

You’d often end with “but YMMV” (your mileage may vary).

Level 3: Talking as a user of the system

The third tier is the unverified opinion: casual feedback from a user’s perspective. It’s like thinking out loud. Your view is just one opinion among many.

You don’t intend your input to be used for any decision. You don’t want people to quote what you said. You just want to share a thought.

In most cases, if you find yourself in a room with people who will take your words seriously and use them to make decisions, stay quiet. Use this level only when you have strong rapport with those people and your words won’t have consequences.

The real problem: People misunderstand the level, and you misunderstand yourself

Once you have enough tenure and seniority, even peers at your own level may take your words as a commitment, or as the verdict of someone with deep experience.

Imagine a director of software engineering casually repeating predictions that AI will replace 90% of the work within two years, and floating the idea of using AI in human resources. The HR director then carries out layoffs, only to find that AI isn’t good enough yet.

This happens because the speaker and the listener didn’t understand the message at the same level.

Most people take anything a Senior Principal Engineer says as a mandate, and anything a junior says as an opinion. Sure, the Senior Principal is most likely right, and the junior may have missed much of the problem’s complexity, but that isn’t true 100% of the time.

If you can’t signal which level you are coming from, you’ll find yourself increasingly afraid to talk as you grow in experience. Anything you say may be taken as a mandate, even if it was just a joke or an opinion. You can’t think out loud or brainstorm casually. You end up being conservative, avoiding risk and never thinking outside the box.

That’s why it’s important that you understand which level you are coming from, that you communicate it clearly, and that people act according to the level of communication you’re using.

The framework only works if you know how to apply it to AI. Here’s exactly how.